Using a novel microscopy technique, MIT and Brigham and Women’s Hospital/Harvard Medical School researchers have imaged human brain tissue in greater detail than ever before, revealing cells and structures that were not previously visible.

Among their findings, the researchers discovered that some “low-grade” brain tumors contain more putative aggressive tumor cells than expected, suggesting that some of these tumors may be more aggressive than previously thought.

The researchers hope that this technique could eventually be deployed to diagnose tumors, generate more accurate prognoses, and help doctors choose treatments.

“We’re starting to see how important the interactions of neurons and synapses with the surrounding brain are to the growth and progression of tumors. A lot of those things we really couldn’t see with conventional tools, but now we have a tool to look at those tissues at the nanoscale and try to understand these interactions,” says Pablo Valdes, a former MIT postdoc who is now an assistant professor of neuroscience at the University of Texas Medical Branch and the lead author of the study.

Edward Boyden, the Y. Eva Tan Professor in Neurotechnology at MIT; a professor of biological engineering, media arts and sciences, and brain and cognitive sciences; a Howard Hughes Medical Institute investigator; and a member of MIT’s McGovern Institute for Brain Research and Koch Institute for Integrative Cancer Research; and E. Antonio Chiocca, a professor of neurosurgery at Harvard Medical School and chair of neurosurgery at Brigham and Women’s Hospital, are the senior authors of the study, which appears today in Science Translational Medicine.

Making molecules visible

The new imaging method is based on expansion microscopy, a technique developed in Boyden’s lab in 2015 based on a simple premise: Instead of using powerful, expensive microscopes to obtain high-resolution images, the researchers devised a way to expand the tissue itself, allowing it to be imaged at very high resolution with a regular light microscope.

The technique works by embedding the tissue into a polymer that swells when water is added, and then softening up and breaking apart the proteins that normally hold tissue together. Then, adding water swells the polymer, pulling all the proteins apart from each other. This tissue enlargement allows researchers to obtain images with a resolution of around 70 nanometers, which was previously possible only with very specialized and expensive microscopes such as scanning electron microscopes.
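As a rough back-of-the-envelope check on that figure (a sketch, not the authors' own calculation): physically enlarging the tissue by some linear factor improves the effective resolution of an ordinary light microscope by about the same factor. The diffraction limit of roughly 300 nanometers and the 4.5-fold linear expansion used below are assumed, illustrative values that do not come from this study, and the function name is hypothetical.

```python
def effective_resolution_nm(diffraction_limit_nm: float, expansion_factor: float) -> float:
    """Estimate the effective resolution after physically expanding a sample.

    Features that were d nanometers apart sit d * expansion_factor apart after
    expansion, so the smallest original distance the microscope can resolve
    is its optical limit divided by the linear expansion factor.
    """
    if expansion_factor <= 0:
        raise ValueError("expansion_factor must be positive")
    return diffraction_limit_nm / expansion_factor


if __name__ == "__main__":
    # Assumed values for illustration only (not taken from the study).
    limit_nm = 300.0   # approximate diffraction limit of a conventional light microscope
    expansion = 4.5    # assumed linear expansion factor of the swollen polymer
    print(f"Effective resolution: about {effective_resolution_nm(limit_nm, expansion):.0f} nm")
```

With these assumed inputs the estimate comes out at about 67 nanometers, in line with the roughly 70 nanometers quoted above.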

In 2017, the Boyden lab developed a way to expand preserved human tissue specimens, but the chemical reagents that they used also destroyed the proteins that the researchers were interested in labeling. By labeling the proteins with fluorescent antibodies before expansion, the proteins’ location and identity could be visualized after the expansion process was complete. However, the antibodies typically used for this kind of labeling can’t easily squeeze through densely packed tissue before it’s expanded.

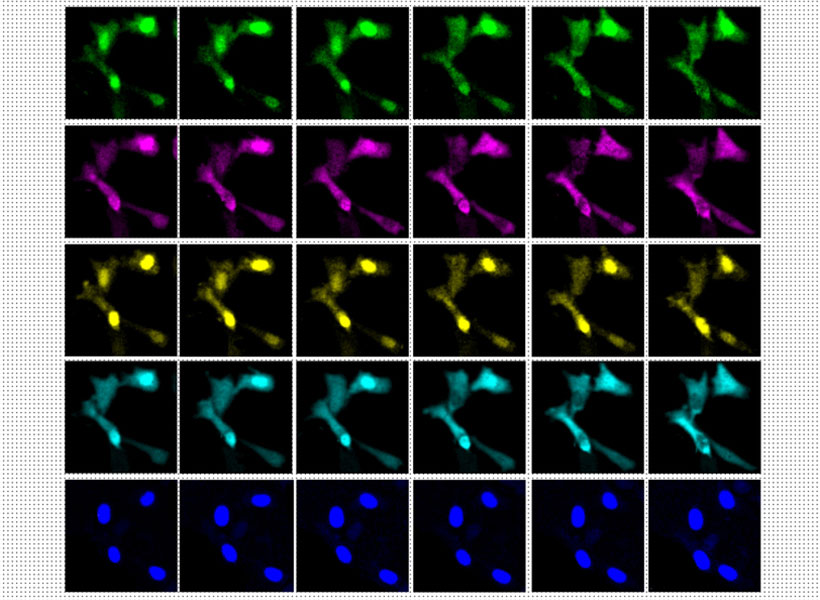

So, for this study, the authors devised a different tissue-softening protocol that breaks up the tissue but preserves proteins in the sample. After the tissue is expanded, proteins can be labeled with commercially available fluorescent antibodies. The researchers can then perform several rounds of imaging, with three or four different proteins labeled in each round. This labeling of proteins enables many more structures to be imaged, because once the tissue is expanded, antibodies can squeeze through and label proteins they couldn’t previously reach.

“We open up the space between the proteins so that we can get antibodies into crowded spaces that we couldn’t otherwise,” Valdes says. “We saw that we could expand the tissue, we could decrowd the proteins, and we could image many, many proteins in the same tissue by doing multiple rounds of staining.”

Working with MIT Assistant Professor Deblina Sarkar, the researchers demonstrated a form of this “decrowding” in 2022 using mouse tissue.

The new study resulted in a decrowding technique for use with human brain tissue samples that are used in clinical settings for pathological diagnosis and to guide treatment decisions. These samples can be more difficult to work with because they are usually embedded in paraffin and treated with other chemicals that need to be broken down before the tissue can be expanded.

In this study, the researchers labeled up to 16 different molecules per tissue sample. The molecules they targeted include markers for a variety of structures, including axons and synapses, as well as markers that identify cell types such as astrocytes and cells that form blood vessels. They also labeled molecules linked to tumor aggressiveness and neurodegeneration.

Using this approach, the researchers analyzed healthy brain tissue, along with samples from patients with two types of glioma — high-grade glioblastoma, which is the most aggressive primary brain tumor, with a poor prognosis, and low-grade gliomas, which are considered less aggressive.

“We wanted to look at brain tumors so that we can understand them better at the nanoscale level, and by doing that, to be able to develop better treatments and diagnoses in the future. At this point, it was more developing a tool to be able to understand them better, because currently in neuro-oncology, people haven’t done much in terms of super-resolution imaging,” Valdes says.

A diagnostic tool

To identify aggressive tumor cells in the gliomas they studied, the researchers labeled vimentin, a protein that is found in highly aggressive glioblastomas. To their surprise, they found many more vimentin-expressing tumor cells in low-grade gliomas than had been seen using any other method.

“This tells us something about the biology of these tumors, specifically, how some of them probably have a more aggressive nature than you would suspect by doing standard staining techniques,” Valdes says.

When glioma patients undergo surgery, tumor samples are preserved and analyzed using immunohistochemistry staining, which can reveal certain markers of aggressiveness, including some of the markers analyzed in this study.

“These are incurable brain cancers, and this type of discovery will allow us to figure out which cancer molecules to target so we can design better treatments. It also proves the profound impact of having clinicians like us at the Brigham and Women’s interacting with basic scientists such as Ed Boyden at MIT to discover new technologies that can improve patient lives,” Chiocca says.

The researchers hope their expansion microscopy technique could allow doctors to learn much more about patients’ tumors, helping them to determine how aggressive the tumor is and guiding treatment choices. Valdes now plans to do a larger study of tumor types to try to establish diagnostic guidelines based on the tumor traits that can be revealed using this technique.

“Our hope is that this is going to be a diagnostic tool to pick up marker cells, interactions, and so on, that we couldn’t before,” he says. “It’s a practical tool that will help the clinical world of neuro-oncology and neuropathology look at neurological diseases at the nanoscale like never before, because fundamentally it’s a very simple tool to use.”

Boyden’s lab also plans to use this technique to study other aspects of brain function, in healthy and diseased tissue.

“Being able to do nanoimaging is important because biology is about nanoscale things — genes, gene products, biomolecules — and they interact over nanoscale distances,” Boyden says. “We can study all sorts of nanoscale interactions, including synaptic changes, immune interactions, and changes that occur during cancer and aging.”

The research was funded by K. Lisa Yang, the Howard Hughes Medical Institute, John Doerr, Open Philanthropy, the Bill and Melinda Gates Foundation, the Koch Institute Frontier Research Program, the National Institutes of Health, and the Neurosurgery Research and Education Foundation.