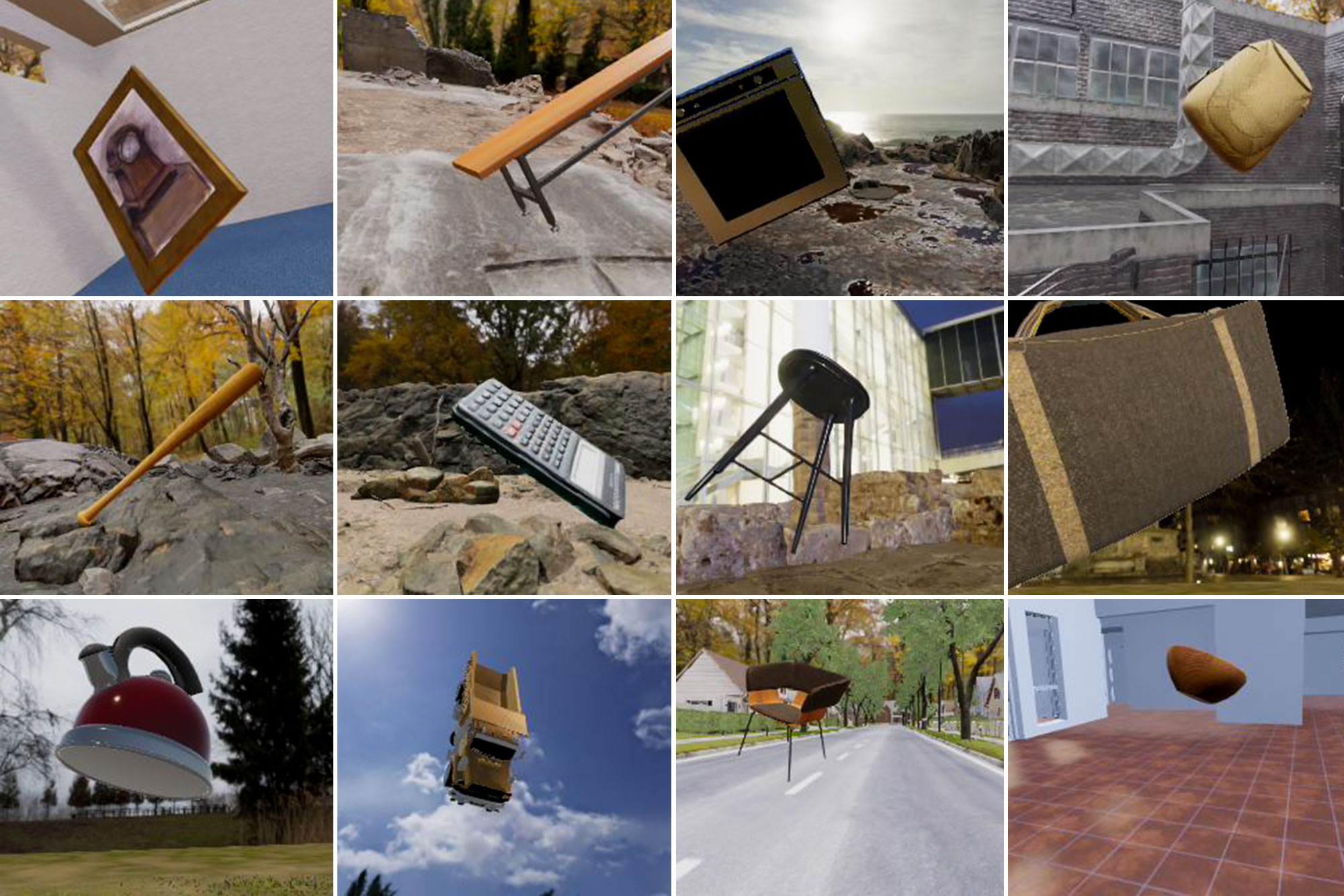

We take for granted our ability to recognize vast numbers of objects rapidly and effortlessly, but this ability is based on a complex network of brain regions. DiCarlo is interested in how this remarkable system works. Our visual system enables us to tell within a fraction of a second whether, for example, a visual scene contains a dog, despite the fact that no two dogs are exactly alike and that the dog’s image on the retina is constantly changing depending on its location, size, pose, and illumination. Somehow, our brains create a representation of “dog-ness” that allows us to recognize an unfamiliar dog based on prior experiences with other dogs. We learn thousands of such categories in early childhood, and we continue to acquire them throughout life.

Using electrophysiological recordings from animals and neuroimaging techniques with animal and human subjects, DiCarlo is studying the patterns of brain activity that underlie our ability to recognize visual objects. He believes that the brain’s ventral visual stream transforms pixel-based images of the world into patterns of neural activity that emphasize object identity and discount potentially confusing variables like the object’s position and size. In collaboration with McGovern colleague Nancy Kanwisher, DiCarlo has shown that the highest stage of the ventral stream – the inferior temporal (IT) cortex – contains clusters of neurons that respond to similar types of objects. He has also shown that the brain’s ability to recognize objects under different conditions is altered by experience. As we gain experience with visual objects, the activity of IT neurons and our perception of objects change, pointing to how the ventral stream might “learn” to represent objects in the first place.

Jim DiCarlo joined the McGovern Institute in 2002, and is currently the Peter de Florez Professor of Brain and Cognitive Sciences as well as Director of the MIT Quest for Intelligence. For nearly nine years, DiCarlo was also the head of MIT’s Brain and Cognitive Sciences Department. He received his MD and PhD in Biomedical Engineering from Johns Hopkins University in 1998 and did his postdoctoral work at Baylor College of Medicine from 1998 to 2002. He is a past recipient of a Sloan fellowship, a Pew Scholar Award, and a McKnight Scholar Award.

Member, American Academy of Arts and Sciences

McKnight Scholar Award in Neuroscience, 2006-2009

MIT School of Science Prize for Excellence in Teaching, 2005

Alfred P. Sloan Research Fellow, 2002

Pew Scholar Award in the Biomedical Sciences, 2002-2006
