When doctors and scientists want to see inside a body, magnetic resonance imaging (MRI) is a powerful tool. MRI can noninvasively capture detailed images of the body’s muscles, organs, and bones. It can monitor blood flow to generate a map of brain activity. And with new sensors developed by bioengineers at MIT, MRI can track the kinds of molecules that make our brains and bodies work.

In the May 13, 2026, issue of the journal Nature Biomedical Engineering, a team led by Alan Jasanoff, the Eugene McDermott Professor in the Brain Sciences and Human Behavior at MIT, reports on its new sensors, which can brighten or dim MRI signals in response to specific molecular targets. The probes are designed to amplify the effect that each target molecule has on the MRI signal, dramatically improving sensitivity over previous small-molecule sensors. Jasanoff, who is also an associate investigator at the McGovern Institute for Brain Research, says the approach his team used should enable the development of MRI sensors that detect neurotransmitters and other important molecules in the brain.

“We want to be able to measure distinct chemical signals like neurotransmitters, neuropeptides, and metabolites as they fluctuate across the whole brain,” Jasanoff says. “These chemicals are important ingredients in neural computations, and we want to use the types of probes that we developed to detect these signals dynamically.”

Engineered nanoparticles

Jasanoff explains that researchers have struggled to use MRI to sensitively detect small molecules in the brain because the amount of any given neurochemical is low. Sensors can be designed to change the brightness of an MRI signal in the presence of specific molecules—but it takes a lot of contrast agent to achieve this. If every molecule of contrast agent needs its own target molecule to activate it, low concentrations of the target molecule limit the sensors’ visibility in an MRI scan. “The signal change that you see in the imaging will be very modest,” Jasanoff says. “It won’t let us detect physiological events.”

The Jasanoff team’s new sensors, whose development was led by postdoctoral researcher Sayani Das and graduate student Jacob Cyert Simon, overcome this problem. To generate a greater signal change in response to target molecules, the researchers designed probes in which a single target molecule impacts not one contrast agent, but many.
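A back-of-the-envelope comparison can show why letting one target molecule affect many contrast-agent molecules helps. The sketch below is a toy illustration only, not the team's model: it assumes that signal change simply scales with the fraction of contrast agent a target switches on, and its concentrations and the hypothetical `agents_per_target` figure are made up rather than taken from the study.

```python
# Toy model only: the concentrations and the "agents per target" figure
# are hypothetical, chosen for illustration, not taken from the study.
# Assumption: MRI signal change scales with the fraction of contrast agent
# that the target molecule switches on.


def fraction_switched(target_um: float, agent_um: float, agents_per_target: int = 1) -> float:
    """Fraction of contrast-agent molecules switched on by the target.

    agents_per_target = 1 models a conventional one-to-one sensor;
    larger values model a sensor in which one target affects many agents.
    The result is capped at 1.0 because no more agent can switch than exists.
    """
    switched = min(target_um * agents_per_target, agent_um)
    return switched / agent_um


target = 1.0   # hypothetical target concentration, micromolar
agent = 100.0  # hypothetical contrast-agent concentration, micromolar

one_to_one = fraction_switched(target, agent)
one_to_many = fraction_switched(target, agent, agents_per_target=20)

print(f"one-to-one:  {one_to_one:.2f}")          # 0.01
print(f"one-to-many: {one_to_many:.2f}")         # 0.20
print(f"gain: {one_to_many / one_to_one:.0f}x")  # 20x
```

The cap reflects that once every contrast-agent molecule is switched, additional target cannot add signal. Real LisNR behavior also depends on channel kinetics and water exchange, which this sketch ignores.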

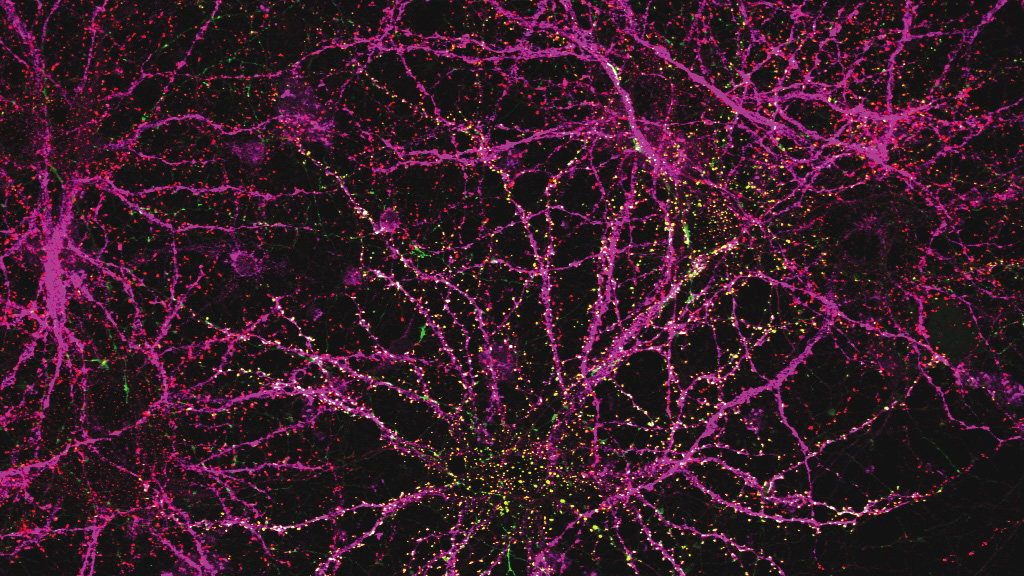

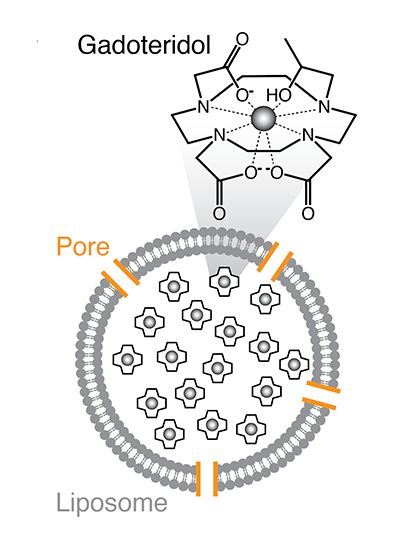

To achieve this, Das and Simon packaged an MRI contrast agent inside tiny sacs called liposomal nanoparticles. Each nanoparticle is packed with many molecules of gadolinium, a magnetic material that brightens the MRI signal arising from hydrogen atoms in water. Sealed inside these protective sacs, however, the gadolinium has no effect on the MRI signal unless water molecules can easily get in and out.

Das and Simon built water channels into the walls of their gadolinium-filled nanoparticles, engineering the channels so that whether they open depends on the presence or absence of a target molecule. When the channels open, more water enters and the gadolinium brightens the local MRI signal, lighting up that spot in a scan.

The researchers call their target-responsive sensors liposomal nanoparticle reporters, or LisNRs (pronounced “listeners”). They designed LisNRs that let water in only in the presence of their target molecule. The water channels in these nanoparticles stay blocked until they encounter their target, which can knock aside a channel-blocking bit of protein. Once the channel blocker is displaced, water enters and MRI signal brightens. They also made LisNRs that dim the MRI signal in the presence of the molecule they are designed to detect. These have a channel that stays open until the target molecule comes along and blocks it, keeping water out. Jasanoff lab members Vinay Sharma, Samira Abozeid, and Gregory Thiabaud played key roles in understanding and optimizing these interactions, and collaborators in the laboratory of Masayuki Inoue at the University of Tokyo helped the group engineer channels with higher potency.

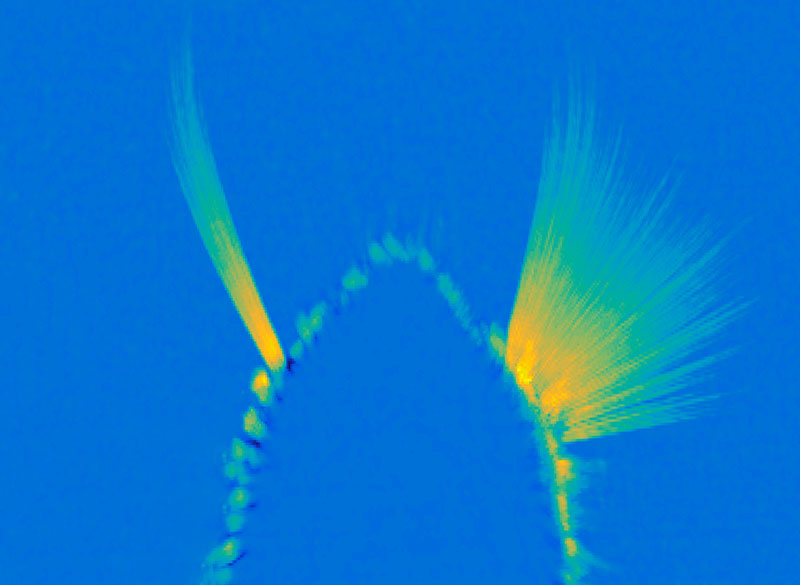

In experiments led by postdoctoral researcher Miranda Dawson, Jasanoff’s team used their LisNRs to detect a molecule called biotin in the brains and bodies of living rats, demonstrating the probes’ amplifying effect. “We showed that we could detect micromolar-scale levels of biotin with about tenfold greater sensitivity than we would have if we’d used a more conventional, one-to-one type sensing approach,” Jasanoff says. He adds that the team’s modeling suggests that with further development, they may be able to achieve even greater sensitivity gains.

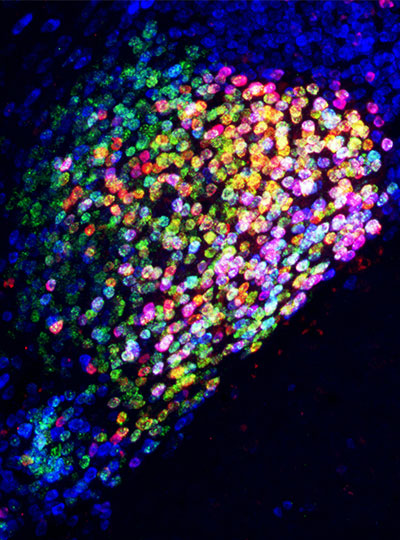

The group showed that the new sensors can be delivered systemically, reaching various organs and spreading throughout the brain. This makes them promising tools for brain-wide imaging, as well as imaging targets in the peripheral nervous system or other tissues.

A next step will be engineering LisNRs that respond to the specific neurochemicals that Jasanoff and his team hope to study. “There are something like 100 neurochemicals in the brain that we’d love to detect in principle,” he says. They’ll start with dopamine and glutamate, two important and relatively abundant molecules that mediate communication between neurons.

This research, including support for postdoctoral fellows and graduate students involved in the work, was funded in part by Lore Harp McGovern, the Yang Tan Collective at MIT, the K. Lisa Yang Brain-Body Center at MIT, the Hock E. Tan and K. Lisa Yang Center for Autism Research at MIT, and the K. Lisa Yang and Hock E. Tan Center for Molecular Therapeutics at MIT.