How motion conveys emotion in the face

Avatars reveal how the brain uses subtle facial movements to understand social cues.

While a static emoji can stand in for emotion, in real life we are constantly reading the feelings of others through subtle facial movements. The lift of an eyebrow, the flicker around the lips as a smile emerges, a subtle change around the eyes (or a sudden eye roll): all feed into our ability to understand the emotional state of others, and their attitude towards us. Ben Deen and Rebecca Saxe have now monitored changes in brain activity as subjects followed face movements in movies of avatars. Their findings suggest that we can generalize across individual face part movements in other people, but that a particular cortical region, the face-responsive superior temporal sulcus (fSTS), also responds to isolated movements of individual face parts. Indeed, the fSTS seems to be tied to kinematics, that is, the movement of individual face parts, more than to the implied emotional cause of that movement.

We know that the brain responds to dynamic changes in facial expression, and that these are associated with activity in the fSTS, but how do calculations of these movements play out in the brain?

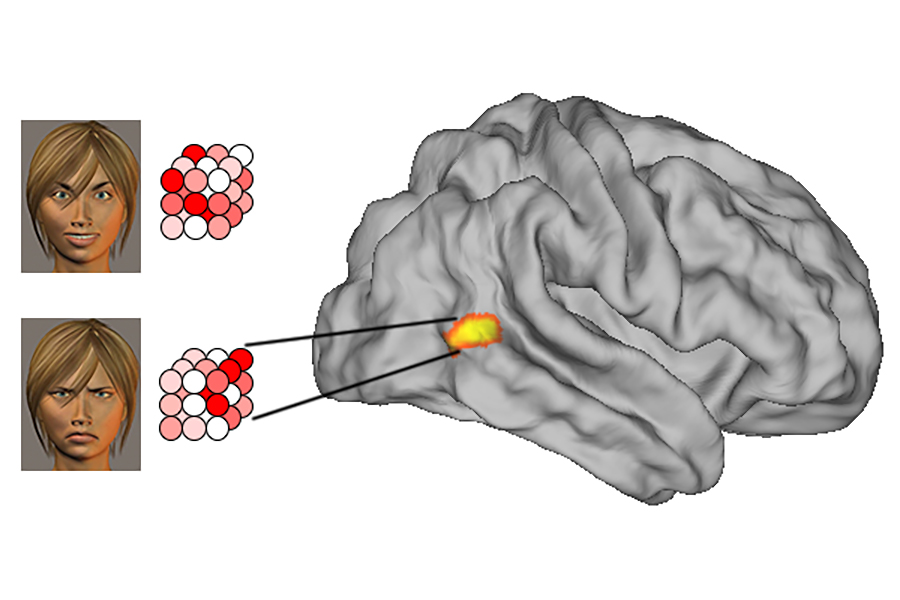

Do we understand emotional changes by adding up individual features (lifting of eyebrows + rounding of mouth = surprise), or do we assess the entire face in a more holistic way that results in more generalized representations? McGovern Investigator Rebecca Saxe and her graduate student Ben Deen set out to answer this question using behavioral analysis and brain imaging, specifically fMRI.

“We had a good sense of what stimuli the fSTS responds strongly to,” explains Ben Deen, “but didn’t really have any sense of how those inputs are processed in the region – what sorts of features are represented, whether the representation is more abstract or more tied to visual features, etc. The hope was to use multivoxel pattern analysis, which has proven to be a remarkably useful method for characterizing representational content, to address these questions and get a better sense of what the region is actually doing.”

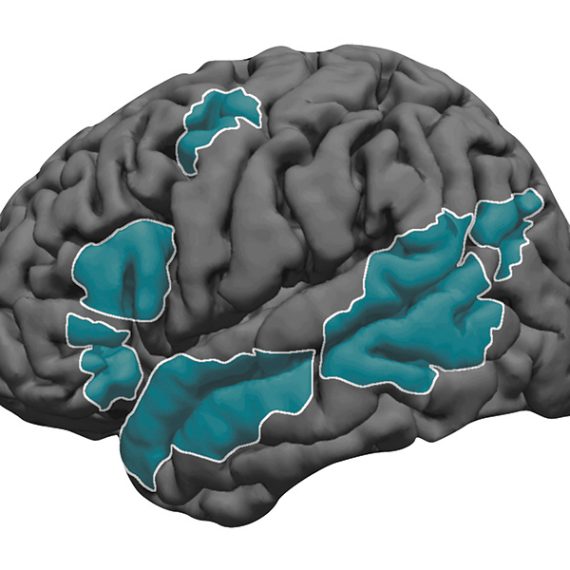

Facial movements were conveyed to subjects using animated “avatars.” By presenting avatars that made isolated eye and eyebrow movements (brow raise, eye closing, eye roll, scowl) or mouth movements (smile, frown, mouth opening, snarl), as well as composites of these movements, the researchers were able to assess whether our interpretation of the latter is distinct from the sum of its parts. To do this, Deen and Saxe first took a behavioral approach in which people reported which combinations of eye and mouth movements they perceived in a whole avatar face, or in one where the top and bottom parts of the face were misaligned. What they found was that movement in the mouth region can influence perception of movement in the eye region, arguably due to some level of holistic processing.

The authors then asked whether there were cortical differences upon viewing isolated vs. combined face part movements. They found that the fSTS, but not other brain regions, had patterns of activity that seemed to discriminate between different facial movements. Indeed, they could decode from fSTS activity which part of the avatar’s face was being perceived as moving. The researchers could even model the fSTS response to combined movements as a linear combination of its responses to individual face parts. In short, though the behavioral data indicate that there is holistic processing of complex facial movement, it is clear that isolated parts-based representations are also present, a sort of intermediate state.
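To make the idea of a linear model concrete, here is a minimal sketch in Python. It is not the authors' analysis: the voxel count, the weights, and all of the data are synthetic and invented purely for illustration, and the function name `fit_linear_combination` is hypothetical. It simply asks how well a response pattern to a combined movement can be written as a weighted sum of the patterns for each face part alone.

```python
import numpy as np

rng = np.random.default_rng(0)
n_voxels = 200  # hypothetical number of voxels in the region

# Synthetic response patterns (one value per voxel) to isolated movements.
eye_only = rng.normal(size=n_voxels)
mouth_only = rng.normal(size=n_voxels)

# Synthetic response to the combined movement: a weighted sum plus noise.
# The weights 0.6 and 0.5 are arbitrary choices for this illustration.
combined = 0.6 * eye_only + 0.5 * mouth_only + rng.normal(scale=0.1, size=n_voxels)


def fit_linear_combination(part_patterns, combined_pattern):
    """Least-squares weights w such that the weighted sum of the part
    patterns approximates the combined pattern, plus the fit's R^2."""
    X = np.column_stack(part_patterns)
    weights, *_ = np.linalg.lstsq(X, combined_pattern, rcond=None)
    predicted = X @ weights
    ss_res = np.sum((combined_pattern - predicted) ** 2)
    ss_tot = np.sum((combined_pattern - combined_pattern.mean()) ** 2)
    return weights, 1.0 - ss_res / ss_tot


weights, r_squared = fit_linear_combination([eye_only, mouth_only], combined)
print("weights:", weights, "R^2:", r_squared)
```

In this toy setting a high R² means the combined pattern is well explained by its parts. With real data, a good linear fit would be consistent with a parts-based representation, while a poor fit would point to something more holistic.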

As part of this work, Deen and Saxe took the important step of pre-registering their experimental parameters, before collecting any data, at the Open Science Framework. This step allows others to more easily reproduce the analysis they conducted, since all parameters (the task that subjects are carrying out, the number of subjects needed, the rationale for this number, and the scripts used to analyze data) are openly available.

“Preregistration had a big impact on our workflow for the study,” explained Deen. “More of the work was done up front, in coming up with all of the analysis details and agonizing over whether we were choosing the right strategy, before seeing any of the data. When you tie your hands by making these decisions up front, you start thinking much more carefully about them.”

Pre-registration removes post-hoc researcher subjectivity from the analysis. For example, because Deen and Saxe predicted that people would be able to discriminate between faces accurately, they decided ahead of the experiment to focus on analyzing reaction time, rather than looking at the collected data and choosing this measure after the fact. This adds to the overall objectivity of the experiment, and pre-registration is increasingly seen as a robust way to conduct such studies.