How the brain encodes landmarks that help us navigate

Neuroscientists discover how a key brain region combines visual and spatial information to help us find our way.

When we move through the streets of our neighborhood, we often use familiar landmarks to help us navigate. And as we think to ourselves, “OK, now make a left at the coffee shop,” a part of the brain called the retrosplenial cortex (RSC) lights up.

While many studies have linked this brain region with landmark-based navigation, exactly how it helps us find our way is not well understood. A new study from MIT neuroscientists now reveals how neurons in the RSC use both visual and spatial information to encode specific landmarks.

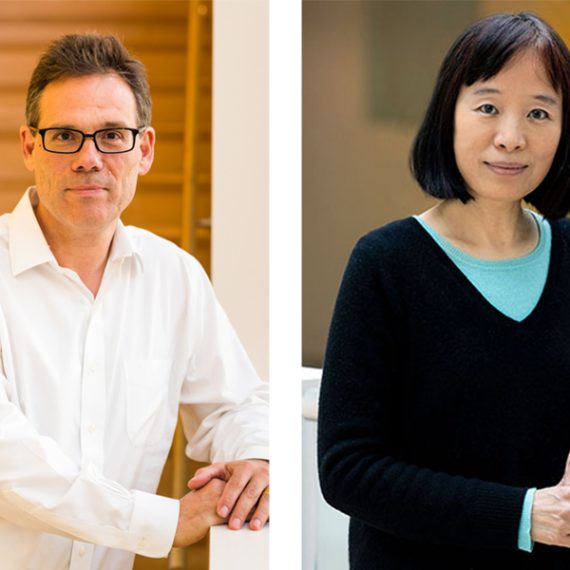

“There’s a synthesis of some of these signals — visual inputs and body motion — to represent concepts like landmarks,” says Mark Harnett, an assistant professor of brain and cognitive sciences and a member of MIT’s McGovern Institute for Brain Research. “What we went after in this study is the neuron-level and population-level representation of these different aspects of spatial navigation.”

In a study of mice, the researchers found that this brain region creates a “landmark code” by combining visual information about the surrounding environment with spatial feedback about the mice’s own position along a track. Integrating these two sources of information allowed the mice to learn where to find a reward, based on the landmarks they saw.

“We believe that this code that we found, which is really locked to the landmarks, and also gives the animals a way to discriminate between landmarks, contributes to the animals’ ability to use those landmarks to find rewards,” says Lukas Fischer, an MIT postdoc and the lead author of the study.

Harnett is the senior author of the study, which appears today in the journal eLife. Other authors are graduate student Raul Mojica Soto-Albors and recent MIT graduate Friederike Buck.

Encoding landmarks

Previous studies have found that people with damage to the RSC have trouble finding their way from one place to another, even though they can still recognize their surroundings. The RSC is also one of the first areas affected in Alzheimer’s patients, who often have trouble navigating.

The RSC is wedged between the primary visual cortex and the motor cortex, and it receives input from both of those areas. It also appears to be involved in combining two types of representations of space — allocentric, meaning the relationship of objects to each other, and egocentric, meaning the relationship of objects to the viewer.

“The evidence suggests that RSC is really a place where you have a fusion of these different frames of reference,” Harnett says. “Things look different when I move around in the room, but that’s because my vantage point has changed. They’re not changing with respect to one another.”

In this study, the MIT team set out to analyze the behavior of individual RSC neurons in mice, including how they integrate multiple inputs that help with navigation. To do that, they created a virtual reality environment in which the mice ran on a treadmill while watching a video screen that made it appear they were running along a track. The speed of the video was determined by how fast the mice ran.

At specific points along the track, landmarks appeared, signaling that a reward was available a certain distance beyond the landmark. The mice had to learn to distinguish between two different landmarks, and to learn how far beyond each one they had to run to get the reward.

Once the mice learned the task, the researchers recorded neural activity in the RSC as the animals ran along the virtual track. They were able to record from a few hundred neurons at a time, and found that most of them anchored their activity to a specific aspect of the task.

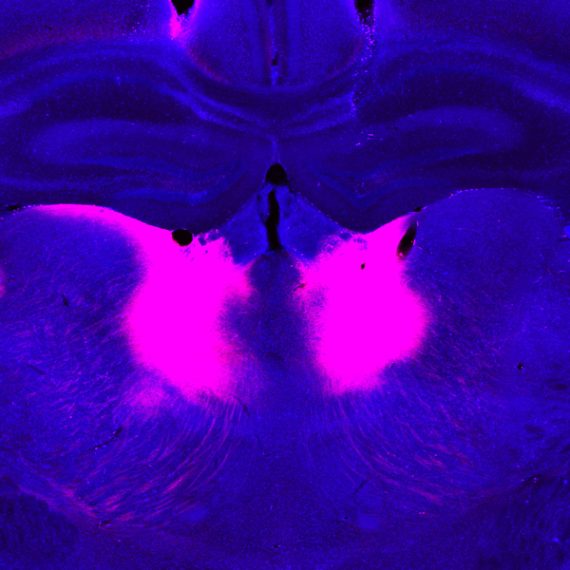

There were three primary anchoring points: the beginning of the trial, the landmark, and the reward point. The majority of the neurons were anchored to the landmarks, meaning that their activity would consistently peak at a specific point relative to the landmark, say 50 centimeters before it or 20 centimeters after it.

Most of those neurons responded to both of the landmarks, but a small subset responded to only one or the other. The researchers hypothesize that those strongly selective neurons help the mice to distinguish between the landmarks and run the correct distance to get the reward.
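The idea of a neuron being "anchored" to a task event can be illustrated with a small sketch. This is not the researchers' analysis; it is a toy example that assumes the landmark and reward positions vary from trial to trial, and every name and number in it is hypothetical. For each candidate anchor, it measures how far the neuron's peak activity falls from that anchor on each trial, then picks the anchor for which that offset is most consistent.

```python
from statistics import pstdev


def best_anchor(peak_positions, anchor_positions):
    """Return the anchor whose alignment gives the most consistent peak offset.

    peak_positions: track position (cm) of the neuron's peak activity per trial.
    anchor_positions: dict mapping an anchor name to that anchor's position (cm)
        on each trial, in the same trial order.
    """
    spreads = {}
    for name, positions in anchor_positions.items():
        # Offset of the activity peak from this anchor on every trial.
        offsets = [peak - pos for peak, pos in zip(peak_positions, positions)]
        # A small spread means the peak is locked to this anchor.
        spreads[name] = pstdev(offsets)
    return min(spreads, key=spreads.get), spreads


# Hypothetical positions in cm, invented for illustration (not study data).
anchors = {
    "trial_start": [0, 0, 0, 0],
    "landmark": [100, 120, 90, 110],
    "reward": [180, 220, 150, 210],
}
# A made-up neuron that always peaks 20 cm after the landmark.
peaks = [120, 140, 110, 130]

anchor, spreads = best_anchor(peaks, anchors)
print(anchor)
```

In this toy case the peak offsets relative to the landmark are identical on every trial, so the landmark comes out as the anchor, while the offsets relative to the trial start and the reward vary.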

When the researchers used optogenetics (a technique that uses light to control neurons, here to turn off their activity) to block activity in the RSC, the mice’s performance on the task became much worse.

Combining inputs

The researchers also did an experiment in which the mice could choose to run or not while the video played at a constant speed, unrelated to the mice’s movement. The mice could still see the landmarks, but the location of the landmarks was no longer linked to a reward or to the animals’ own behavior. In that situation, RSC neurons did respond to the landmarks, but not as strongly as they did when the mice were using them for navigation.

Further experiments allowed the researchers to tease out just how much neuron activation is produced by visual input (seeing the landmarks) and by feedback on the mouse’s own movement. However, simply adding those two numbers yielded totals much lower than the neuron activity seen when the mice were actively navigating the track.

“We believe that is evidence for a mechanism of nonlinear integration of these inputs, where they get combined in a way that creates a larger response than what you would get if you just added up those two inputs in a linear fashion,” Fischer says.
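The comparison behind that statement comes down to simple arithmetic, sketched below. The function name and the response values are hypothetical and chosen only for illustration; they are not measurements from the study. The sketch divides the response seen during active navigation by the sum of the visual-only and movement-only responses, so a ratio above 1 indicates a combined response larger than linear addition would predict.

```python
def supralinearity_index(visual_only, motor_only, combined):
    """Ratio of the observed combined response to the linear sum of its parts."""
    linear_sum = visual_only + motor_only
    if linear_sum <= 0:
        raise ValueError("the linear sum of the two inputs must be positive")
    return combined / linear_sum


# Hypothetical response amplitudes in arbitrary units (not study data).
visual_only = 0.5   # response to seeing the landmark without navigating
motor_only = 0.25   # response to movement feedback alone
combined = 1.5      # response while actively navigating

index = supralinearity_index(visual_only, motor_only, combined)
print(index)  # 2.0: the combined response is twice the linear prediction
```

A ratio of exactly 1 would mean the two inputs simply add up, which is the outcome the researchers report they did not see.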

The researchers now plan to analyze data that they have already collected on how neuron activity evolves over time as the mice learn the task. They also hope to perform further experiments in which they could try to separately measure visual and spatial inputs into different locations within RSC neurons.

The research was funded by the National Institutes of Health, the McGovern Institute, the NEC Corporation Fund for Research in Computers and Communications at MIT, and the Klingenstein-Simons Fellowship in Neuroscience.

Paper: “Representation of visual landmarks in retrosplenial cortex”