This story is adapted from a January 27, 2021 press release from Massachusetts General Hospital.

The ability to understand others’ hidden thoughts and beliefs is an essential component of human social behavior. Now, neuroscientists have for the first time identified specific neurons critical for social reasoning, a cognitive process that requires individuals to acknowledge and predict others’ hidden beliefs and thoughts.

The findings, published in Nature, open new avenues of study into disorders that affect social behavior, according to the authors.

In the study, a team of Harvard Medical School investigators based at Massachusetts General Hospital and colleagues from MIT took a rare look at how individual neurons represent the beliefs of others. They did so by recording neuron activity in patients undergoing neurosurgery to alleviate symptoms of motor disorders such as Parkinson’s disease.

Theory of mind

The research team, which included McGovern scientists Ev Fedorenko and Rebecca Saxe, focused on a complex social cognitive process called “theory of mind.” To illustrate, suppose a friend appears sad on her birthday. One might infer that she is sad because she didn’t get a present, or because she is upset about growing older.

“When we interact, we must be able to form predictions about another person’s unstated intentions and thoughts,” said senior author Ziv Williams, HMS associate professor of neurosurgery at Mass General. “This ability requires us to paint a mental picture of someone’s beliefs, which involves acknowledging that those beliefs may be different from our own and assessing whether they are true or false.”

This social reasoning process develops during early childhood and is fundamental to successful social behavior. Individuals with autism, schizophrenia, bipolar affective disorder, or traumatic brain injuries are believed to have deficits in theory-of-mind ability.

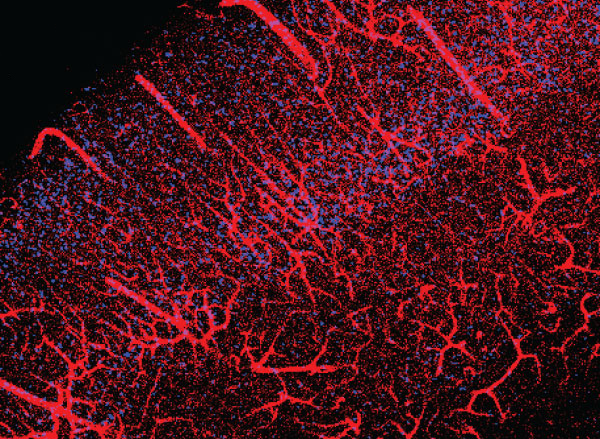

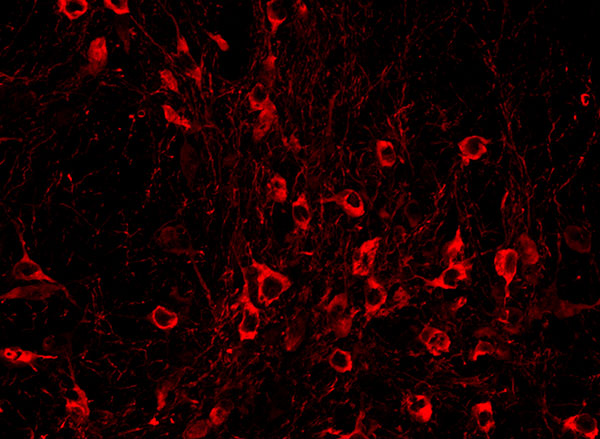

For the study, 15 patients agreed to perform brief behavioral tasks before undergoing neurosurgery to implant deep-brain stimulation electrodes for motor disorders. Microelectrodes inserted into the dorsomedial prefrontal cortex recorded the activity of individual neurons as patients listened to short narratives and answered questions about them.

For example, participants were presented with the following scenario to evaluate how they considered another’s belief of reality: “You and Tom see a jar on the table. After Tom leaves, you move the jar to a cabinet. Where does Tom believe the jar to be?”

Social computation

The participants had to make inferences about another’s beliefs after hearing each story. The experiment did not change the planned surgical approach or alter clinical care.

“Our study provides evidence to support theory of mind by individual neurons,” said study first author Mohsen Jamali, HMS instructor in neurosurgery at Mass General. “Until now, it wasn’t clear whether or how neurons were able to perform these social cognitive computations.”

The investigators found that some neurons are specialized: some, for example, respond only when another person’s belief is assessed as false. Other neurons encode information that distinguishes one person’s beliefs from another’s. Still others represent a specific item mentioned in the story, such as a cup or a food item. Some neurons may multitask and are not dedicated solely to social reasoning.

“Each neuron is encoding different bits of information,” Jamali said. “By combining the computations of all the neurons, you get a very detailed representation of the contents of another’s beliefs and an accurate prediction of whether they are true or false.”

Now that scientists understand the basic cellular mechanism that underlies human theory of mind, they have an operational framework to begin investigating disorders in which social behavior is affected, according to Williams.

“Understanding social reasoning is also important to many different fields, such as child development, economics, and sociology, and could help in the development of more effective treatments for conditions such as autism spectrum disorder,” Williams said.

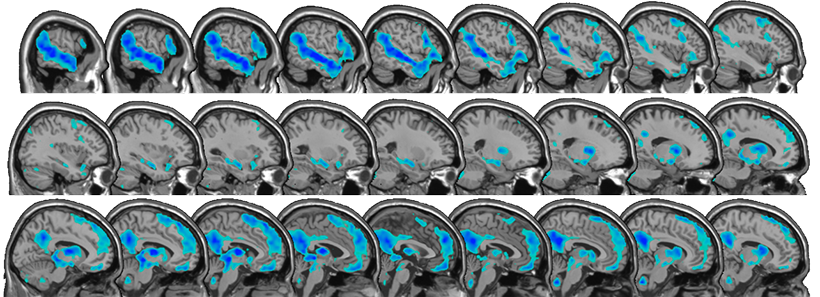

Previous research on the cognitive processes that underlie theory of mind has involved functional MRI studies, where scientists watch which parts of the brain are active as volunteers perform cognitive tasks.

But imaging studies capture the activity of many thousands of neurons at once. In contrast, Williams and colleagues recorded the computations of individual neurons, which provided a detailed picture of how neurons encode social information.

“Individual neurons, even within a small area of the brain, are doing very different things, not all of which are involved in social reasoning,” Williams said. “Without delving into the computations of single cells, it’s very hard to build an understanding of the complex cognitive processes underlying human social behavior and how they go awry in mental disorders.”
