In a new study, MIT neuroscientists have identified parts of the brain’s visual cortex that respond preferentially when you look at “things” — that is, rigid or deformable objects like a bouncing ball. Other brain regions are more activated when looking at “stuff” — liquids or granular substances such as sand.

This distinction, which has never been seen in the brain before, may help the brain plan how to interact with different kinds of physical materials, the researchers say.

“When you’re looking at some fluid or gooey stuff, you engage with it in a different way than you do with a rigid object. With a rigid object, you might pick it up or grasp it, whereas with fluid or gooey stuff, you probably are going to have to use a tool to deal with it,” says Nancy Kanwisher, the Walter A. Rosenblith Professor of Cognitive Neuroscience; a member of the McGovern Institute for Brain Research and MIT’s Center for Brains, Minds, and Machines; and the senior author of the study.

MIT postdoc Vivian Paulun, who is joining the faculty of the University of Wisconsin at Madison this fall, is the lead author of the paper, which appears today in the journal Current Biology. RT Pramod, an MIT postdoc, and Josh Tenenbaum, an MIT professor of brain and cognitive sciences, are also authors of the study.

Stuff vs. things

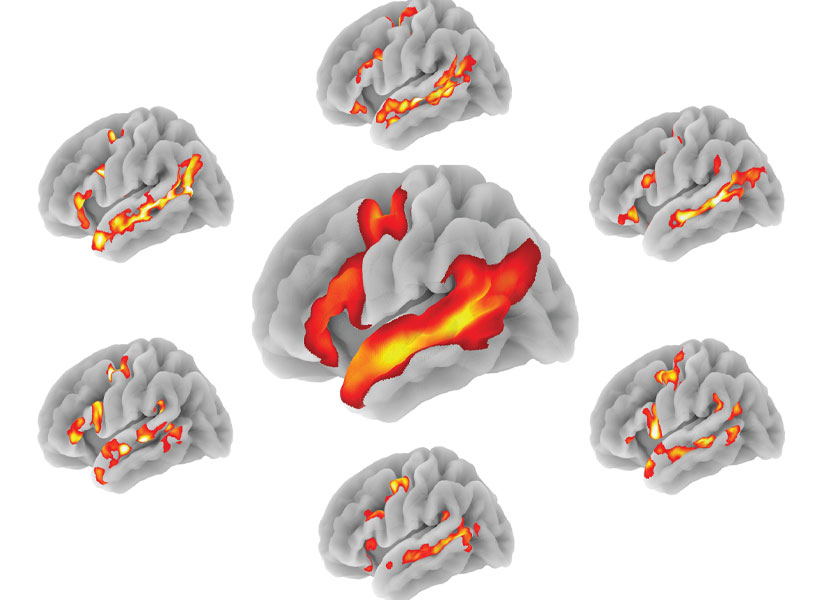

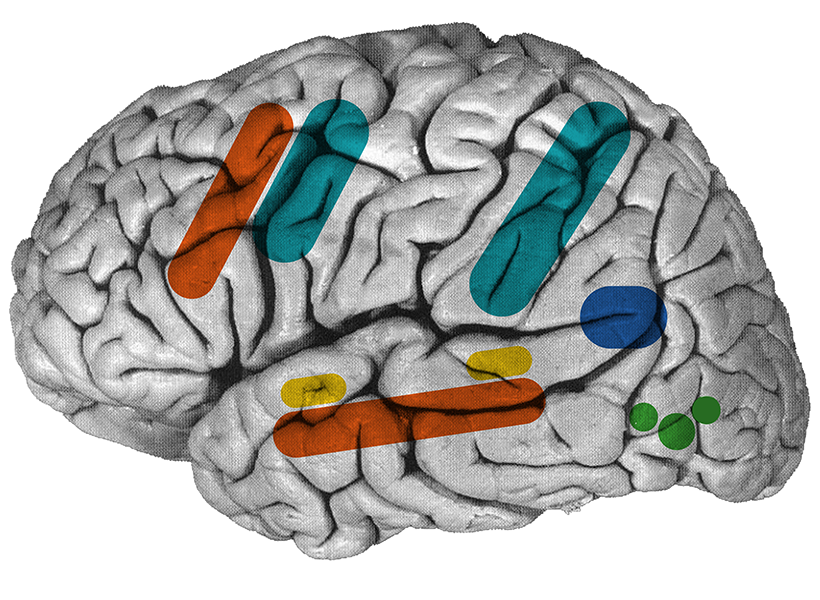

Decades of brain imaging studies, including early work by Kanwisher, have revealed regions in the brain’s ventral visual pathway that are involved in recognizing the shapes of 3D objects, including an area called the lateral occipital complex (LOC). A region in the brain’s dorsal visual pathway, known as the frontoparietal physics network (FPN), analyzes the physical properties of materials, such as mass or stability.

Although scientists have learned a great deal about how these pathways respond to different features of objects, the vast majority of these studies have been done with solid objects, or “things.”

“Nobody has asked how we perceive what we call ‘stuff’ — that is, liquids or sand, honey, water, all sorts of gooey things. And so we decided to study that,” Paulun says.

These gooey materials behave very differently from solids. They flow rather than bounce, and interacting with them usually requires containers and tools such as spoons. The researchers wondered if these physical features might require the brain to devote specialized regions to interpreting them.

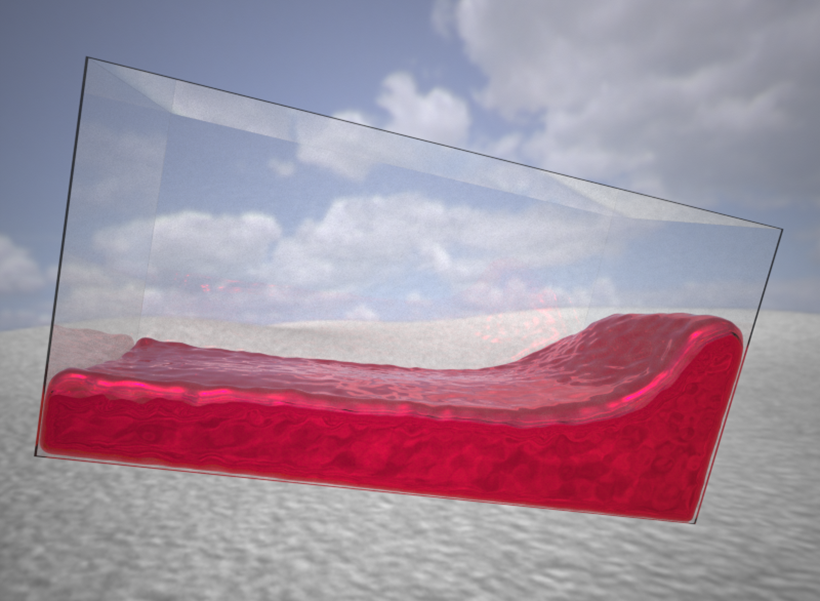

To explore how the brain processes these materials, Paulun used a software program designed for visual effects artists to create more than 100 video clips showing different types of things or stuff interacting with the physical environment. In these videos, the materials could be seen sloshing or tumbling inside a transparent box, being dropped onto another object, or bouncing or flowing down a set of stairs.

The researchers used functional magnetic resonance imaging (fMRI) to scan the visual cortex of people as they watched the videos. They found that both the LOC and the FPN respond to “things” and “stuff,” but that each pathway has distinctive subregions that respond more strongly to one or the other.

“Both the ventral and the dorsal visual pathway seem to have this subdivision, with one part responding more strongly to ‘things,’ and the other responding more strongly to ‘stuff,’” Paulun says. “We haven’t seen this before because nobody has asked that before.”

Roland Fleming, a professor of experimental psychology at Justus Liebig University of Giessen, described the findings as a “major breakthrough in the scientific understanding of how our brains represent the physical properties of our surrounding world.”

“We’ve known the distinction exists for a long time psychologically, but this is the first time that it’s been really mapped onto separate cortical structures in the brain. Now we can investigate the different computations that the distinct brain regions use to process and represent objects and materials,” says Fleming, who was not involved in the study.

Physical interactions

The findings suggest that the brain may have different ways of representing these two categories of material, similar to the artificial physics engines that are used to create video game graphics. These engines usually represent a 3D object as a mesh, while fluids are represented as sets of particles that can be rearranged.
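To make the mesh-versus-particles contrast concrete, here is a minimal Python sketch. It is only an illustration of the general idea, not the software used in the study or the API of any real game engine; the names `RigidMesh`, `ParticleFluid`, and `distance` are hypothetical.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


def distance(a: Vec3, b: Vec3) -> float:
    """Euclidean distance between two 3D points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


@dataclass
class RigidMesh:
    """A 'thing' (hypothetical): vertices joined into triangular faces."""
    vertices: list[Vec3]
    faces: list[tuple[int, int, int]]  # each face indexes three vertices

    def translate(self, offset: Vec3) -> None:
        # Every vertex moves by the same offset, so the shape is preserved.
        self.vertices = [
            tuple(v + o for v, o in zip(vertex, offset))
            for vertex in self.vertices
        ]


@dataclass
class ParticleFluid:
    """'Stuff' (hypothetical): unconnected particles with their own velocities."""
    positions: list[Vec3]
    velocities: list[Vec3]

    def step(self, dt: float) -> None:
        # Each particle moves independently, so the overall shape can change.
        self.positions = [
            tuple(p + v * dt for p, v in zip(pos, vel))
            for pos, vel in zip(self.positions, self.velocities)
        ]


# A single triangle moved as one rigid piece.
mesh = RigidMesh(
    vertices=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    faces=[(0, 1, 2)],
)
mesh.translate((2.0, 0.0, 0.0))

# Two particles drifting toward each other.
fluid = ParticleFluid(
    positions=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    velocities=[(1.0, 0.0, 0.0), (-1.0, 0.0, 0.0)],
)
fluid.step(0.25)
```

In this toy version, moving the mesh leaves the distances between its vertices unchanged, while stepping the fluid changes the spacing between particles, which is the sense in which particles "can be rearranged."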

“The interesting hypothesis that we can draw from this is that maybe the brain, similar to artificial game engines, has separate computations for representing and simulating ‘stuff’ and ‘things.’ And that would be something to test in the future,” Paulun says.

The researchers also hypothesize that these regions may have developed to help the brain understand important distinctions that allow it to plan how to interact with the physical world. To further explore this possibility, the researchers plan to study whether the areas involved in processing rigid objects are also active when a brain circuit involved in planning to grasp objects is active.

They also hope to look at whether any of the areas within the FPN correlate with the processing of more specific features of materials, such as the viscosity of liquids or the bounciness of objects. And in the LOC, they plan to study how the brain represents changes in the shape of fluids and deformable substances.

The research was funded by the German Research Foundation, the U.S. National Institutes of Health, and a U.S. National Science Foundation grant to the Center for Brains, Minds, and Machines.