One of the symptoms of schizophrenia is difficulty incorporating new information about the world. This can lead patients to struggle with making decisions and, eventually, to lose touch with reality.

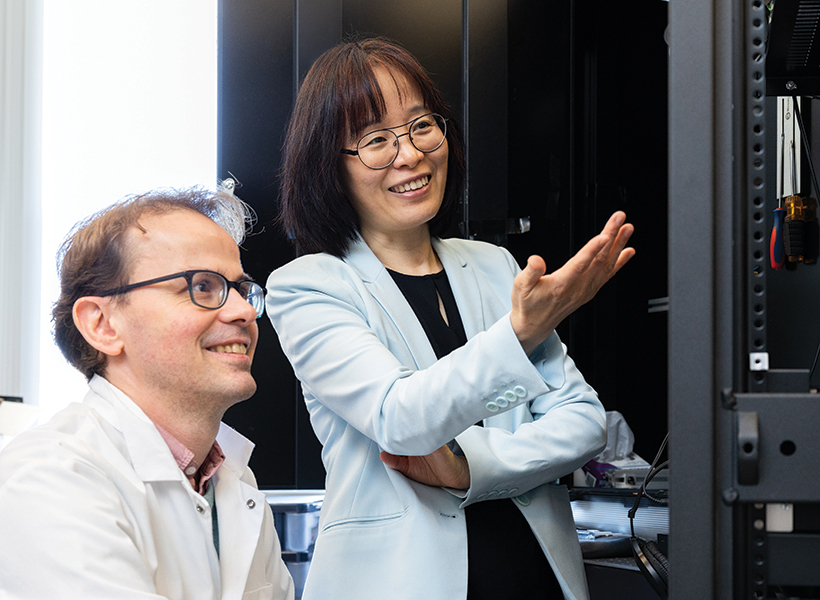

MIT neuroscientists have now identified a gene mutation that appears to give rise to this type of difficulty. In a study of mice, the researchers found that the mutated gene impairs the function of a brain circuit that is responsible for updating beliefs based on new input.

This mutation, in a gene called grin2a, was originally identified in a large-scale screen of patients with schizophrenia. The new study suggests that drugs targeting this brain circuit could help with some of the cognitive impairments seen in schizophrenia patients.

“If this circuit doesn’t work well, you cannot quickly integrate information,” says Guoping Feng, the James W. and Patricia T. Poitras Professor in Brain and Cognitive Sciences at MIT, a member of the Broad Institute of Harvard and MIT, and the associate director of the McGovern Institute for Brain Research at MIT. “We are quite confident this circuit is one of the mechanisms that contributes to the cognitive impairment that is a major part of the pathology of schizophrenia.”

Feng and Michael Halassa, an associate professor of psychiatry and neuroscience at Tufts University, are the senior authors of the new study, which appears today in Nature Neuroscience. Tingting Zhou, a research scientist at the McGovern Institute, and Yi-Yun Ho, a former MIT postdoc, are the lead authors of the paper.

Adapting to new information

Schizophrenia is known to have a strong genetic component. In the general population, the risk of developing the disease is about 1 percent, but it rises to about 10 percent for people who have a parent or sibling with the disease and to about 50 percent for people who have an identical twin with the disease.

Researchers at the Stanley Center for Psychiatric Research at the Broad Institute have identified more than 100 gene variants linked to schizophrenia, using genome-wide association studies. However, many of those variants are located in non-coding regions of the genome, making it difficult to figure out how they might influence development of the disease.

More recently, researchers at the Stanley Center used a different strategy, known as whole-exome sequencing, to reveal gene mutations linked to schizophrenia. This technique sequences only the protein-coding regions of the genome, so it can reveal mutations that are located in known genes.

Using this approach on about 25,000 sequences from people with schizophrenia and 100,000 sequences from control subjects, the researchers identified 10 genes in which mutations significantly increase the risk of developing schizophrenia.

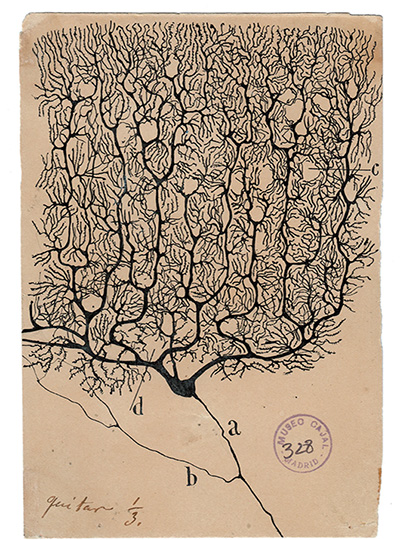

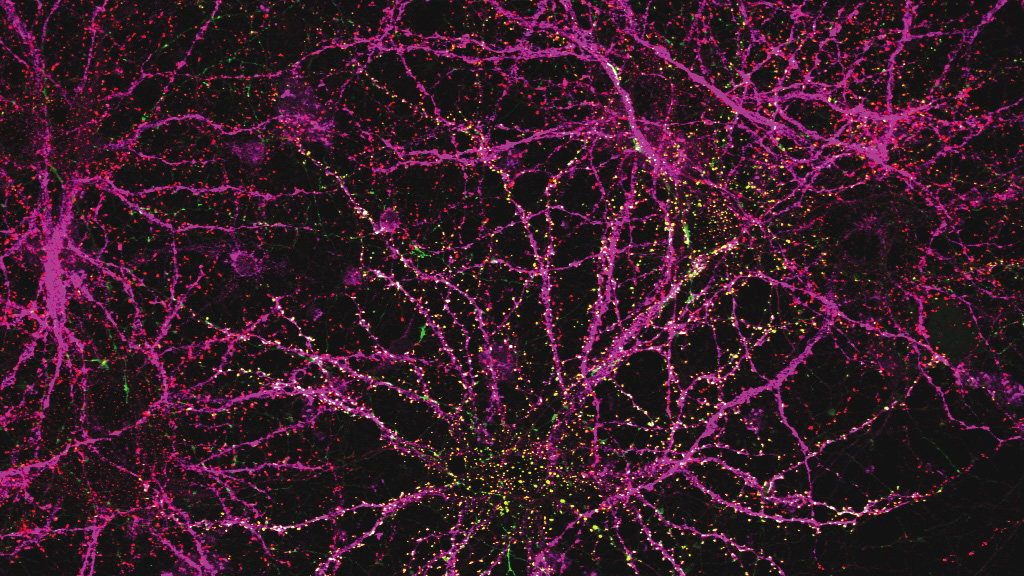

In the new Nature Neuroscience study, Feng and his students created a mouse model with a mutation in one of those genes, grin2a. This gene encodes a protein that forms part of the NMDA receptor — a receptor that is activated by the neurotransmitter glutamate and is often found on the surface of neurons.

Zhou then investigated whether these mice displayed any of the characteristic behaviors seen in schizophrenia patients. These patients show many complex symptoms, including psychotic symptoms such as hallucinations and delusions (loss of contact with reality). These are difficult to study in mice, but it is possible to study related symptoms such as difficulty interpreting new sensory input.

Over the past two decades, schizophrenia researchers have hypothesized that psychosis may stem from an impaired ability to update beliefs based on new information.

“Our brain can form a prior belief of reality, and when sensory input comes into the brain, a neurotypical brain can use this new input to update the prior belief. This allows us to generate a new belief that’s close to what the reality is,” Zhou says. “What happens in schizophrenia patients is that they weigh too heavily on the prior belief. They don’t use as much current input to update what they believed before, so the new belief is detached from reality.”
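To make the idea of over-weighting the prior concrete, here is a minimal, illustrative sketch. It assumes a simple delta-rule update in which a hypothetical weight `w_input` (between 0 and 1) sets how far each new observation moves the current belief. This is not the model used in the study; the function names and numbers are invented for illustration, and the sketch only shows how putting less weight on new input makes a belief lag behind a changed reality.

```python
def update_belief(prior: float, observation: float, w_input: float) -> float:
    """Move the prior toward the observation by the fraction w_input.

    w_input near 1 trusts the new input; w_input near 0 clings to the prior.
    (Hypothetical illustration, not the study's model.)
    """
    return prior + w_input * (observation - prior)


def track(observations: list[float], w_input: float, start: float = 0.0) -> list[float]:
    """Return the belief after each observation, starting from `start`."""
    beliefs = []
    belief = start
    for obs in observations:
        belief = update_belief(belief, obs, w_input)
        beliefs.append(belief)
    return beliefs


if __name__ == "__main__":
    # Reality jumps from 0 to 10 and stays there for five observations.
    observations = [10.0] * 5
    print("balanced weighting:   ", [round(b, 2) for b in track(observations, w_input=0.5)])
    print("prior-heavy weighting:", [round(b, 2) for b in track(observations, w_input=0.1)])
```

With `w_input=0.5` the belief is within about 0.3 of the new value after five observations; with `w_input=0.1` it is still below half of the new value, a toy version of a belief that stays "detached from reality."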

To study this, Zhou designed an experiment that required mice to choose which of two levers to press to earn a food reward. One lever was low-reward: mice had to push it six times to get one drop of milk. The other, high-reward lever dispensed three drops per push.

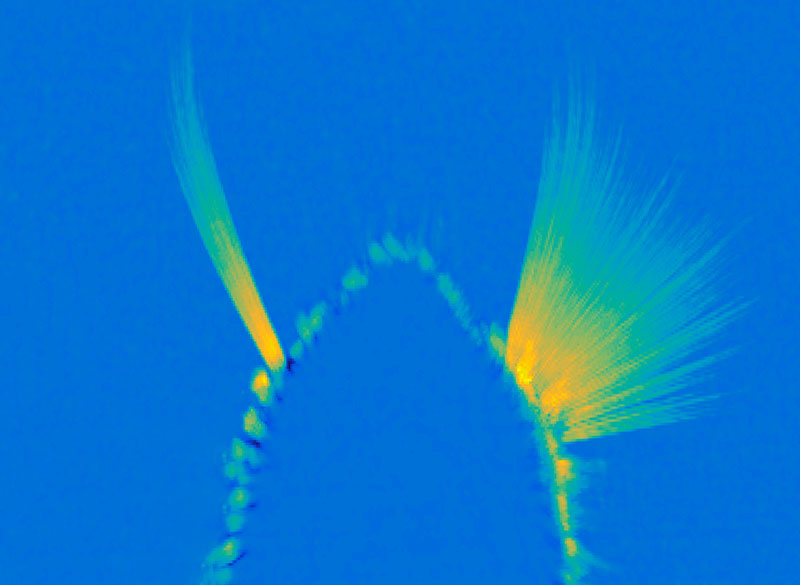

At the beginning of the study, all of the mice learned to prefer the high-reward lever. However, as the experiment went on, the number of presses required to dispense the higher reward gradually went up, while there were no changes to the low-reward lever.

As the effort required went up, healthy mice started to switch back and forth between the two levers. Once they had to press the high-reward lever around 18 times for three drops of milk, making the effort per drop about the same for each lever, they eventually switched permanently to the low-reward lever. However, mice with a mutation in grin2a behaved differently. They spent more time switching back and forth between the two levers, and they made the switch to the low-reward side much later.

“We find that neurotypical animals make adaptive decisions in this changing environment,” Zhou says. “They can switch from the high-reward side to the low-reward side around the equal value point, while for the animals with the mutation, the switch happens much later. Their adaptive decision-making is much slower compared to the wild-type animals.”
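The equal value point Zhou describes follows from simple arithmetic on effort per drop of milk. Writing $n$ for the number of presses the high-reward lever requires for its three drops (a symbol introduced here only for illustration):

$$
\text{low-reward lever: } \frac{6 \text{ presses}}{1 \text{ drop}} = 6 \text{ presses per drop},
\qquad
\text{high-reward lever: } \frac{n \text{ presses}}{3 \text{ drops}}
$$

$$
\frac{n}{3} = 6 \quad\Longrightarrow\quad n = 18
$$

Below 18 presses the high-reward lever costs less effort per drop; above 18 the low-reward lever is the better deal.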

An impaired circuit

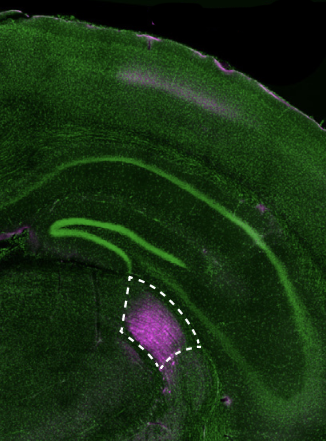

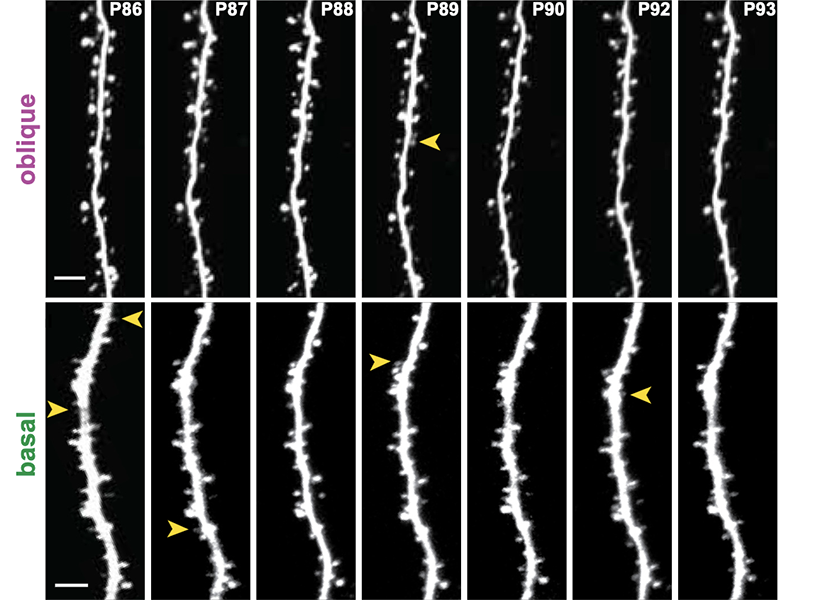

Using functional ultrasound imaging and electrical recordings, the researchers found that the brain region affected most by the grin2a mutation was the mediodorsal thalamus. This part of the brain connects with the prefrontal cortex to form a thalamocortical circuit that is responsible for regulating cognitive functions such as executive control and decision-making.

The researchers found that neuronal activity in the mediodorsal thalamus appears to keep track of the changes in value of the two reward options. Additionally, the mice showed different patterns of neural activity depending on which state they were in: either an exploratory state or committed to one side.

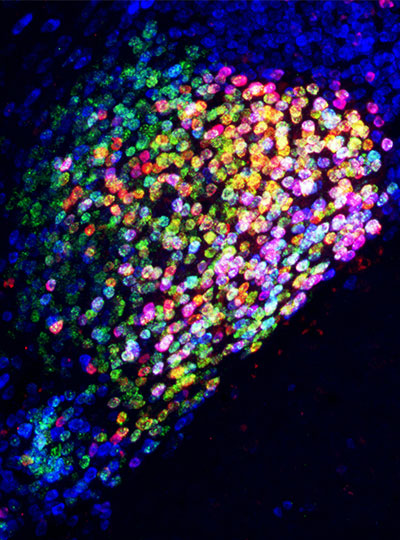

The researchers also showed that they could use optogenetics to reverse the behavioral symptoms of the mice with mutated grin2a. They engineered the neurons of the mediodorsal thalamus so that they could be activated by light, and when these neurons were activated, the mice began behaving similarly to mice without the grin2a mutation.

While only a very small percentage of schizophrenia patients have mutations in the grin2a gene, it's possible that this circuit dysfunction is a common mechanism of cognitive impairment shared by a subset of schizophrenia patients whose disease has different underlying causes.

Targeting this circuit could offer a way to overcome some of the cognitive impairments seen in schizophrenia patients, the researchers say. To do that, they are now working on identifying targets within the circuit that could potentially be druggable.

The research was funded by the National Institute of Mental Health, the Poitras Center for Psychiatric Disorders Research at MIT, the Yang Tan Collective at MIT, the K. Lisa Yang and Hock E. Tan Center for Molecular Therapeutics at MIT, the Stelling Family Research Fund at MIT, the Stanley Center for Psychiatric Research, and the Brain and Behavior Research Foundation.