When patients undergo general anesthesia, doctors can choose among several drugs. Although each of these drugs acts on neurons in different ways, they all lead to the same result: a disruption of the brain’s balance between stability and excitability, according to a new MIT study.

This disruption causes neural activity to become increasingly unstable until the brain loses consciousness, the researchers found. The discovery of this common mechanism could make it easier to develop new technologies for monitoring patients while they are under anesthesia.

“What’s exciting about that is the possibility of a universal anesthesia-delivery system that can measure this one signal and tell how unconscious you are, regardless of which drugs they’re using in the operating room,” says Earl Miller, the Picower Professor of Neuroscience and a member of MIT’s Picower Institute for Learning and Memory.

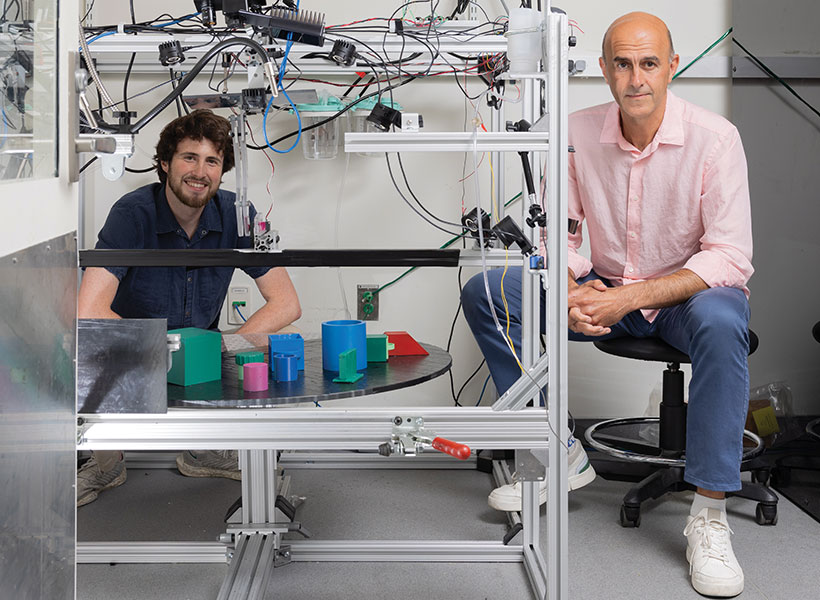

Miller; Emery Brown, the Edward Hood Taplin Professor of Medical Engineering and Computational Neuroscience; and their colleagues are now working on an automated control system for delivering anesthesia drugs, which would measure the brain’s stability using EEG and then automatically adjust the drug dose. This could help doctors keep patients unconscious throughout surgery without letting them become too deeply unconscious, which can cause negative side effects after the procedure.

Miller and Ila Fiete, a professor of brain and cognitive sciences, the director of the K. Lisa Yang Integrative Computational Neuroscience Center (ICoN), and a member of MIT’s McGovern Institute for Brain Research, are the senior authors of the new study, which appears today in Cell Reports. MIT graduate student Adam Eisen is the paper’s lead author.

Destabilizing the brain

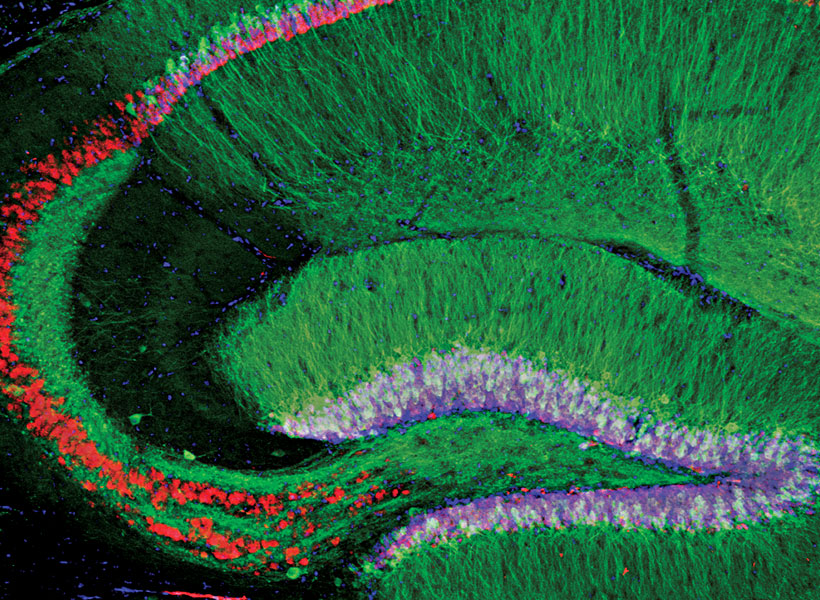

Exactly how anesthesia drugs cause the brain to lose consciousness has been a longstanding question in neuroscience. In 2024, a study from Miller’s and Fiete’s labs suggested that, at least for propofol, the answer lies in a disruption of the balance between stability and excitability in the brain.

When someone is awake, their brain is able to maintain this delicate balance, responding to sensory information or other input and then returning to a stable baseline.

“The nervous system has to operate on a knife’s edge in this narrow range of excitability,” Miller says. “It has to be excitable enough so different parts can influence one another, but if it gets too excited it goes off into chaotic activity.”

In that 2024 study, the researchers found that propofol knocks the brain out of this state, known as “dynamic stability.” As doses of the drug increased, the brain took longer and longer to return to its baseline state after responding to new input. This effect became increasingly pronounced until consciousness was lost.
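A textbook linear-stability argument shows why a lengthening return to baseline signals lost stability. Treat activity near baseline as small deviations $\mathbf{x}(t)$ obeying linearized dynamics with a matrix $A$; this is an idealization assumed here for illustration, not a formulation taken from the study. For a diagonalizable $A$ with eigenvalues $\lambda_i$ and eigenvectors $\mathbf{v}_i$:

$$
\dot{\mathbf{x}} = A\mathbf{x}
\quad\Longrightarrow\quad
\mathbf{x}(t) = \sum_i c_i\, e^{\lambda_i t}\, \mathbf{v}_i,
\qquad
\tau = -\frac{1}{\max_i \operatorname{Re}\lambda_i}
\quad \text{when } \max_i \operatorname{Re}\lambda_i < 0 .
$$

The slowest-decaying mode dominates recovery, so the recovery time $\tau$ grows without bound as $\max_i \operatorname{Re}\lambda_i \to 0^-$. Past that point, perturbations grow instead of fading, which is one way to picture the loss of dynamic stability.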

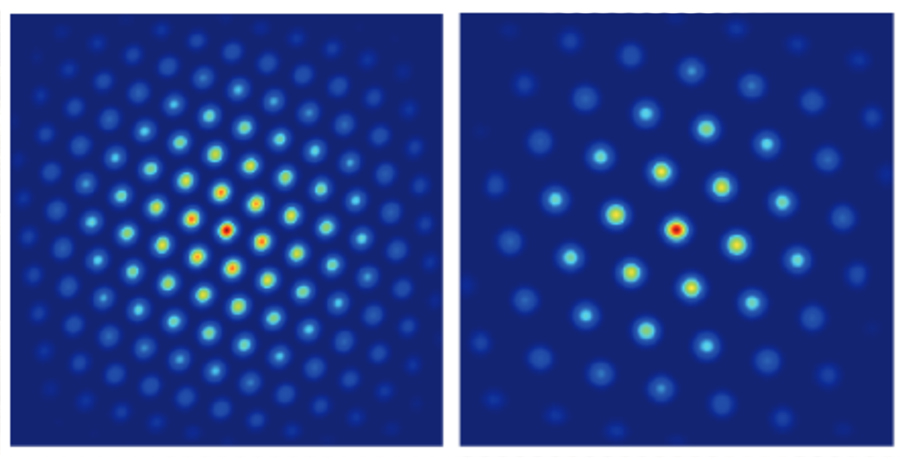

For that study, the researchers devised a computational model that analyzes neural activity recorded from the brain. This technique allowed them to determine how the brain responds to perturbations such as an auditory tone or other sensory input, and how long it takes to return to its baseline stability.
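To make “how long it takes to return to baseline” concrete, here is a minimal sketch under simplifying assumptions. It is not the computational model used in the study: it swaps in a plain least-squares linear fit, x[t+1] ≈ A x[t], to multichannel activity, then reads the slowest decay time off the largest eigenvalue magnitude of A. The function names, the simulated two-channel data, and the sampling interval `dt` are all hypothetical.

```python
import numpy as np


def fit_linear_dynamics(x: np.ndarray) -> np.ndarray:
    """Least-squares estimate of A in x[t+1] ≈ A @ x[t].

    x has shape (T, n): T time samples of n recorded channels,
    assumed already mean-subtracted (hypothetical preprocessing).
    """
    past, future = x[:-1], x[1:]
    # lstsq solves past @ M ≈ future, so M is the transpose of A.
    m, *_ = np.linalg.lstsq(past, future, rcond=None)
    return m.T


def recovery_time(a: np.ndarray, dt: float) -> float:
    """Decay time constant (seconds) of the slowest mode of A.

    A perturbation along an eigenvector with eigenvalue lambda shrinks by
    a factor |lambda| per step, so it decays as exp(-t / tau) with
    tau = -dt / ln|lambda|. The slowest mode sets the recovery time.
    """
    rho = float(np.max(np.abs(np.linalg.eigvals(a))))
    if rho >= 1.0:
        return float("inf")  # marginal or unstable: perturbations never die out
    return float(-dt / np.log(rho))


# Toy check on simulated data with a known slow mode (eigenvalue 0.9).
rng = np.random.default_rng(0)
a_true = np.array([[0.9, 0.0], [0.0, 0.5]])
T, dt = 20_000, 0.001  # hypothetical: 20,000 samples at 1 kHz
x = np.zeros((T, 2))
for t in range(T - 1):
    # Random noise stands in for ongoing input that keeps perturbing activity.
    x[t + 1] = a_true @ x[t] + rng.normal(size=2)

a_hat = fit_linear_dynamics(x)
tau_hat = recovery_time(a_hat, dt)
tau_true = recovery_time(a_true, dt)  # -dt / ln(0.9), about 0.0095 s
print(f"estimated {tau_hat:.4f} s vs true {tau_true:.4f} s")
```

Moving the slow eigenvalue closer to 1 (say 0.99 instead of 0.9) lengthens the recovery time roughly tenfold, a qualitative analogue of the slowing the researchers describe as propofol doses increased.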

In their new study, the researchers used the same technique to measure how the brain responds not only to propofol but also to two additional anesthesia drugs: ketamine and dexmedetomidine. Animals were given one of the three drugs while their brain activity, including their responses to auditory tones, was recorded and analyzed.

This study showed that the same destabilization induced by propofol also appears during administration of the other two drugs. This “universal signature” appears even though the three drugs have different molecular mechanisms: propofol binds to GABA receptors, inhibiting neurons that have those receptors; dexmedetomidine blocks the release of norepinephrine; and ketamine blocks NMDA receptors, suppressing neurons with those receptors.

Each of these pathways, the researchers hypothesize, affects the brain’s balance of stability and excitability in a different way, yet each leads to an overall destabilization of this balance.

“All three of these drugs appear to do the exact same thing,” Miller says. “In fact, you could look at the destabilization measure we use and you can’t tell which drug is being applied.”

The researchers now plan to further investigate how each of these drugs may give rise to the same patterns of brain destabilization.

“The molecular mechanisms of ketamine and dexmedetomidine are a bit more involved than propofol mechanisms,” Eisen says. “A future direction is to do a meaningful model of what the biophysical effects of those are and see how that could lead to destabilization.”

Monitoring anesthesia

Now that the researchers have shown that three different anesthesia drugs produce similar destabilization patterns in the brain, they believe that measuring those patterns could offer a valuable way to monitor patients during anesthesia. Although anesthesia is generally very safe, it does carry some risks, especially for very young children and for people over 65.

For adults suffering from dementia, anesthesia can make the condition worse, and it can also exacerbate neuropsychiatric disorders such as depression. These risks are higher if patients go into a deeper state of unconsciousness known as burst suppression.

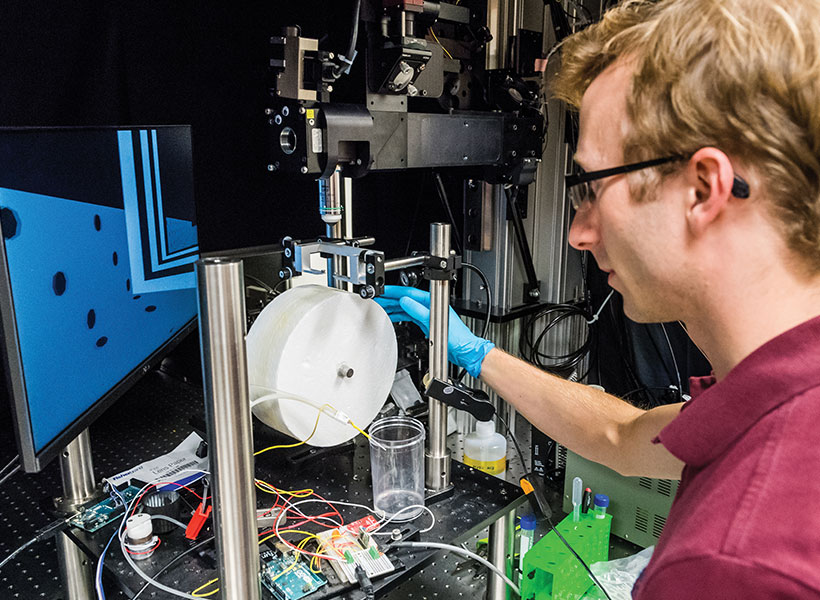

To help reduce those risks, Miller and Brown, who is also an anesthesiologist at Massachusetts General Hospital (MGH), are developing a prototype device that measures patients’ EEG readings while they are under anesthesia and adjusts their dose accordingly. Currently, doctors monitor patients’ heart rate, blood pressure, and other vital signs during surgery, but these signs do not indicate as accurately how deeply unconscious the patient is.
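The article does not describe the prototype’s control law. As a hedged illustration of what “measure, then automatically adjust the dose” can look like in code, here is a textbook proportional-integral (PI) feedback loop. Everything specific is an assumption: the `DoseController` class, the destabilization index it reads, the gains, the dose limit, and the toy first-order “patient” whose index follows the infusion rate with a 10-second lag.

```python
from dataclasses import dataclass


@dataclass
class DoseController:
    """Hypothetical PI controller for an infusion rate.

    `target` is a desired value of an illustrative destabilization index;
    none of these names or gains come from the MIT prototype.
    """

    target: float
    kp: float         # proportional gain
    ki: float         # integral gain
    max_rate: float   # upper limit on the infusion rate
    integral: float = 0.0

    def update(self, measured: float, dt: float) -> float:
        # Positive error: brain less destabilized than desired (too light), so give more drug.
        error = self.target - measured
        candidate = self.integral + error * dt
        rate = self.kp * error + self.ki * candidate
        # Anti-windup: keep the integral update only when the output is not saturated.
        if 0.0 <= rate <= self.max_rate:
            self.integral = candidate
        return min(max(rate, 0.0), self.max_rate)


def simulate(controller: DoseController, steps: int, dt: float = 1.0) -> float:
    """Drive a toy first-order 'patient' model and return the final index.

    Toy assumption: the index relaxes toward the infusion rate with a
    10-second time constant. Real pharmacology is far more complex.
    """
    index, tau = 0.0, 10.0
    for _ in range(steps):
        rate = controller.update(index, dt)
        index += dt / tau * (rate - index)
    return index


ctrl = DoseController(target=1.0, kp=0.5, ki=0.05, max_rate=5.0)
final_index = simulate(ctrl, steps=500)
print(f"index after 500 s: {final_index:.3f} (target 1.0)")
```

A PI loop is a common first choice for this kind of regulation: the proportional term reacts to the current gap, and the integral term removes the steady offset that proportional control alone would leave. The clamp keeps the dose within fixed bounds, though any real system would need clinically validated limits and safety checks.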

“If you can limit people’s exposure to anesthesia, if you give just enough and no more, you can reduce risks across the board,” Miller says.

Working with researchers at Brown University, the MIT team is now planning to run a small clinical trial of their monitoring device with patients undergoing surgery.

The research was funded by the U.S. Office of Naval Research, the National Institute of Mental Health, the Simons Center for the Social Brain, the Freedom Together Foundation, the Picower Institute, the National Science Foundation Computer and Information Science and Engineering Directorate, the Simons Collaboration on the Global Brain, the McGovern Institute, and the National Institutes of Health.