Experience is a powerful teacher—and not every experience has to be our own to help us understand the world. What happens to others is instructive, too. That’s true for humans as well as for other social animals. New research from scientists at the McGovern Institute shows what happens in the brains of monkeys as they integrate their observations of others with knowledge gleaned from their own experience.

“The study shows how you use observation to update your assumptions about the world,” explains McGovern Institute Investigator Mehrdad Jazayeri, who led the research. His team’s findings, published in the January 7 issue of the journal Nature, also help explain why we tend to weigh information gleaned from observation and direct experience differently when we make decisions. Jazayeri is also a professor of brain and cognitive sciences at MIT and an investigator at the Howard Hughes Medical Institute.

“As humans, we do a large part of our learning through observing other people’s experiences and what they go through and what decisions they make,” says Setayesh Radkani, a graduate student in Jazayeri’s lab. For example, she says, if you get sick after eating out, you might wonder if the food at the restaurant was to blame. As you consider whether it’s safe to return, you’ll likely take into account whether the friends you’d dined with got sick too. Your experiences as well as those of your friends will inform your understanding of what happened.

The research team wanted to know how this works: When we make decisions that draw on both direct experience and observation, how does the brain combine the two kinds of evidence? Are the two kinds of information handled differently?

Social experiment

It is hard to tease out the factors that influence social learning. “When you’re trying to compare experiential learning versus observational learning, there are a ton of things that can be different,” Radkani says. For example, people may draw different conclusions from someone else’s experiences than from their own, because they know less about that person’s motivations and beliefs. Factors like social status, individual differences, and emotional states can further complicate these situations and are hard to control for, even in a lab.

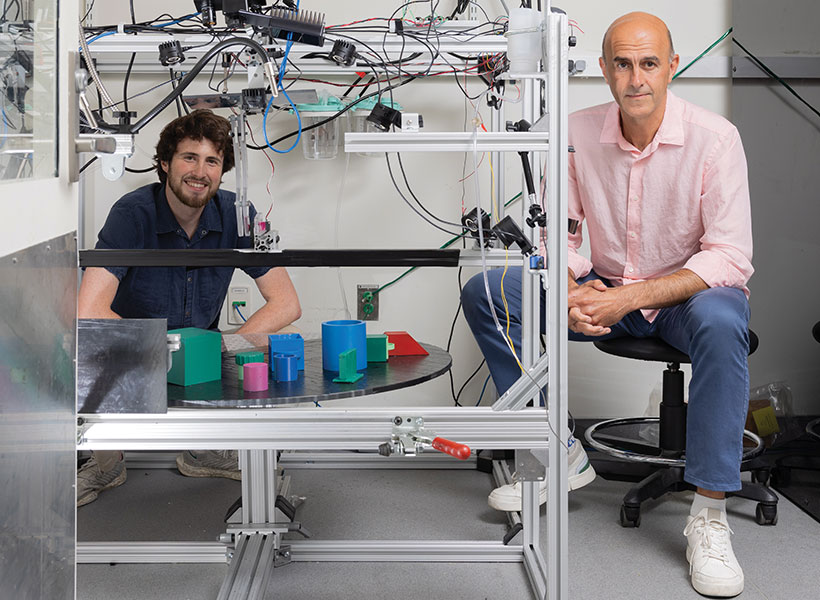

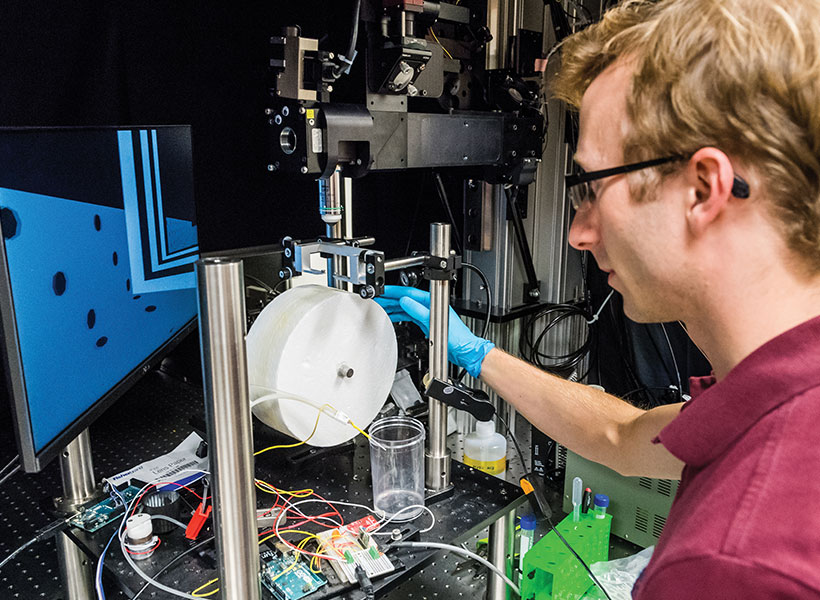

To create a carefully controlled scenario in which they could focus on how observation changes our understanding of the world, Radkani and postdoctoral fellow Michael Yoo devised a computer game that would allow two players to learn from one another through their experiences. They taught this game to both humans and monkeys.

Their approach, Jazayeri says, goes far beyond the kinds of tasks that are typically studied in a neuroscience lab. “I think it might be one of the most sophisticated tasks monkeys have been trained to perform in a lab,” he says.

Both monkeys and humans played the game in pairs. The object was to collect enough tokens to earn a reward. Players could choose to enter either of two virtual arenas to play—but in one of the two arenas, tokens had no value. In that arena, no matter how many tokens a player collected, they could not win. Players were not told which arena was which, and the winnable and unwinnable arenas sometimes swapped without warning.

Only one individual played at a time, but regardless of who was playing, both individuals watched all of the games. So as either player collected tokens and either did or did not receive a reward, both the player and the observer got the same information. They could use that information to decide which arena to choose in their next round.

Experience outweighs observation

Humans and monkeys both have sophisticated social intelligence, and both clearly took their partners’ experiences into account as they played the game. But the researchers found that the outcomes of a player’s own games had a stronger influence on each individual’s choice of arena than the outcomes of their partner’s games. “They seem to learn less efficiently from observation, suggesting they tend to devalue the observational evidence,” Radkani says. That distinction was reflected in the patterns of neural activity that the team detected in the brains of the monkeys.

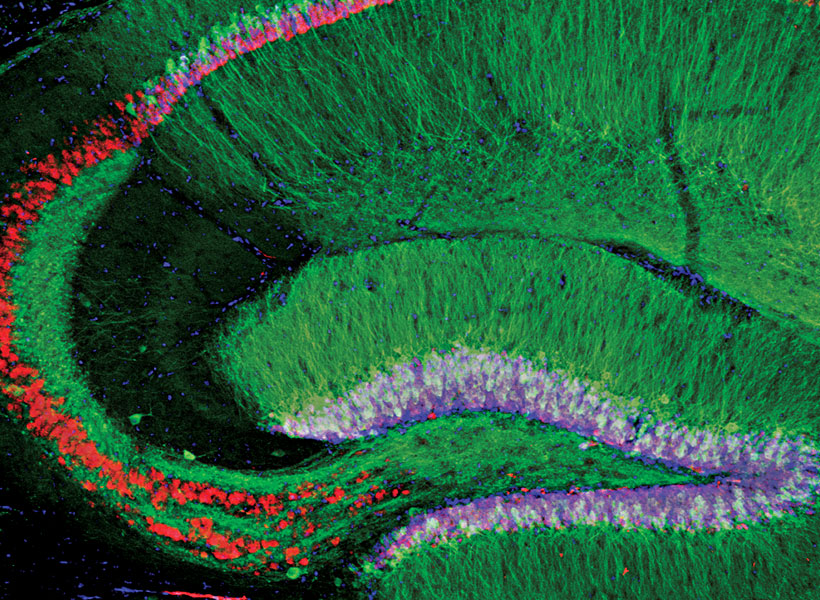

Postdoctoral fellow Ruidong Chen and research assistant Neelima Valluru recorded signals from a part of the brain’s frontal lobe called the anterior cingulate cortex (ACC) as the monkeys played the game. The ACC is known to be involved in social processing. It also integrates information gained across multiple experiences and seems to use that information to update an animal’s beliefs about the world. Prior to the Jazayeri lab’s experiments, this integrative function had only been linked to animals’ direct experiences, not their observations of others.

Consistent with earlier studies, neurons in the ACC changed their activity patterns both when the monkeys played the game and when they watched their partner take a turn. But these signals were complex and variable, making it hard to discern the underlying logic. To tackle this challenge, Chen recorded neural activity from large groups of neurons in both animals across dozens of experiments. “We also had to devise new analysis methods to crack the code and tease out the logic of the computation,” Chen says.

One of the researchers’ central questions was how information about self and other makes its way to the ACC. The team reasoned that there were two possibilities: either the ACC receives a single input on each trial specifying who is acting, or it receives separate input streams for self and other. To test these alternatives, they built artificial neural network models organized both ways and analyzed how well each model matched their neural data. The results suggested that the ACC receives two distinct inputs, one reflecting evidence acquired through direct experience and one reflecting evidence acquired through observation.

The team also found a tantalizing clue about why the brain tends to trust firsthand experiences more than observations. Their analysis showed that the integration process in the ACC was biased toward direct experience. That bias is consistent with the behavior of both humans and monkeys, who gave more weight to their own outcomes than to those of their partner.
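To make the idea of biased integration concrete, here is a minimal sketch in Python. It is an illustration only, not the researchers’ model: the function names, the log-odds update rule, and the weights `w_self` and `w_other` are all assumptions chosen for clarity. It tracks a belief about which of two arenas is currently winnable and gives an outcome more influence when it comes from the player’s own game than when it is observed.

```python
import math

def update_belief(log_odds, arena, rewarded, source,
                  w_self=1.0, w_other=0.5, evidence=1.0):
    """Return updated log-odds that arena "A" is the winnable one.

    Hypothetical rule: every outcome shifts the belief by a fixed amount,
    scaled by a weight that depends on who played ("self" or "other").
    The default weights are illustrative, not values from the study.
    """
    if source not in ("self", "other"):
        raise ValueError("source must be 'self' or 'other'")
    weight = w_self if source == "self" else w_other
    # A reward in A or a failure in B favors A; the reverse favors B.
    direction = 1.0 if (arena == "A") == rewarded else -1.0
    return log_odds + weight * evidence * direction

def prob_a(log_odds):
    """Convert log-odds into the probability that arena A is winnable."""
    return 1.0 / (1.0 + math.exp(-log_odds))

# Same outcome (no reward in arena A), experienced directly vs. observed.
own = update_belief(0.0, "A", rewarded=False, source="self")
seen = update_belief(0.0, "A", rewarded=False, source="other")
print(prob_a(own), prob_a(seen))
```

Under these assumed weights, a failure the player experiences directly lowers confidence in arena A more than the identical failure seen in a partner’s game, which is the qualitative pattern the article describes.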

Jazayeri says the study paves the way to deeper investigations of how the brain drives social behavior. Now that his team has examined one of the most fundamental features of social learning, they plan to add nuance to their studies, potentially exploring how different abilities or the social relationships between animals influence learning.

“Under the broad umbrella of social cognition, this is like step zero,” he says. “But it’s a really important step, because it begins to provide a basis for understanding how the brain represents and uses social information in shaping the mind.”

This research was supported in part by the Yang Tan Collective at MIT.