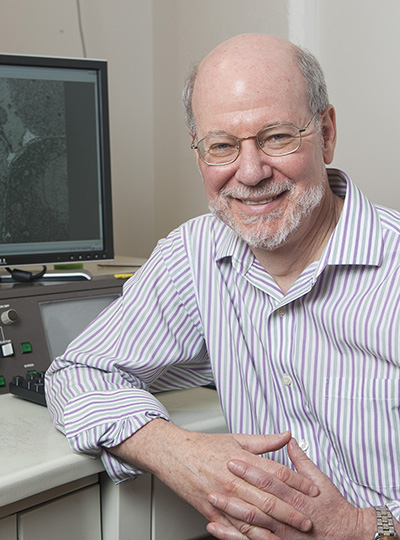

Today, the McGovern Institute for Brain Research at MIT announced Stanford University neuroscientist Liqun Luo as the recipient of the 2026 Edward M. Scolnick Prize in Neuroscience. Luo is the Ann and Bill Swindells Professor in the School of Humanities and Sciences, Professor of Biology, and Professor of Neurobiology (by courtesy) at Stanford University, as well as a Howard Hughes Medical Institute Investigator. The McGovern Institute presents the Scolnick Prize annually to recognize outstanding achievements in neuroscience.

“Liqun Luo’s development of first-in-kind genetic tools and detailed, innovative experimentation has succeeded in defining rules that govern how transient cell-cell contacts ultimately establish functional neural circuits in the developing brain,” says McGovern Institute Director Robert Desimone, who is also chair of the selection committee. “Luo’s methodologies for visualizing specific subsets of neurons based on their developmental trajectory or their activity are widely used in the field and have driven the identification of neurons responsible for a range of behaviors, including sleep and social interactions.”

Liqun Luo was born in Shanghai, China, and earned his bachelor’s degree in molecular biology from the University of Science and Technology of China in 1986. He moved to the US for graduate studies with Kalpana White at Brandeis University, where he characterized the fruit fly (Drosophila) homolog of the Alzheimer’s amyloid precursor protein. After receiving his PhD in 1992, he joined Lily Jan and Yuh-Nung Jan at the University of California, San Francisco, for postdoctoral training, publishing a number of papers on how small GTPase proteins regulate cellular morphology. Luo descends from a scientific lineage of mentors trained by his hero Seymour Benzer, who is widely credited with founding the field of neurogenetics.

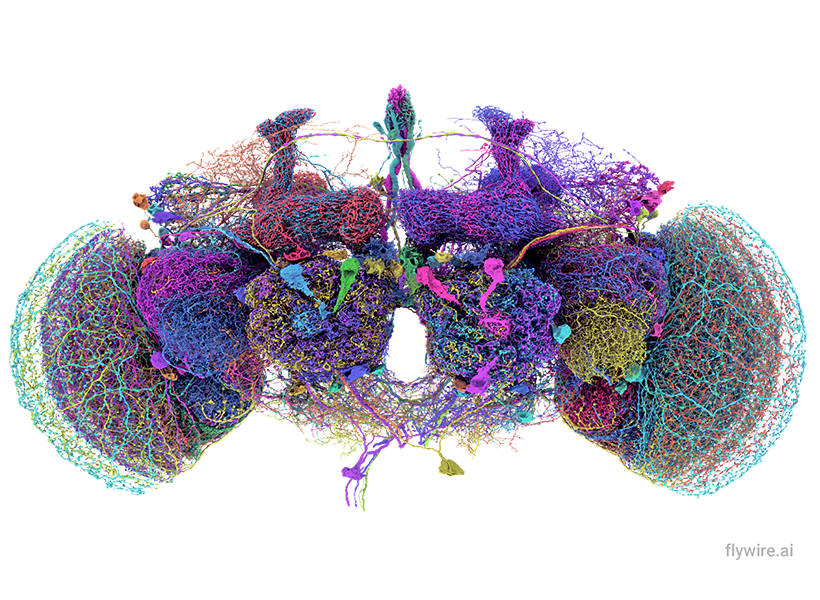

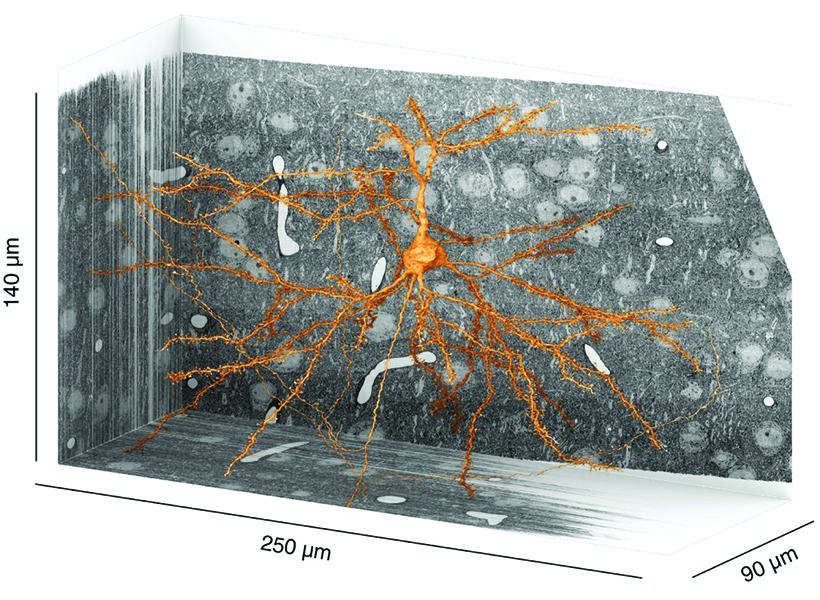

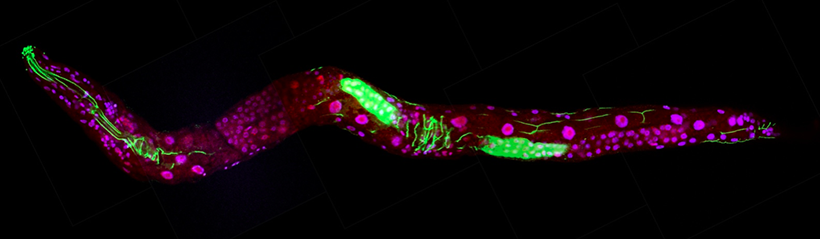

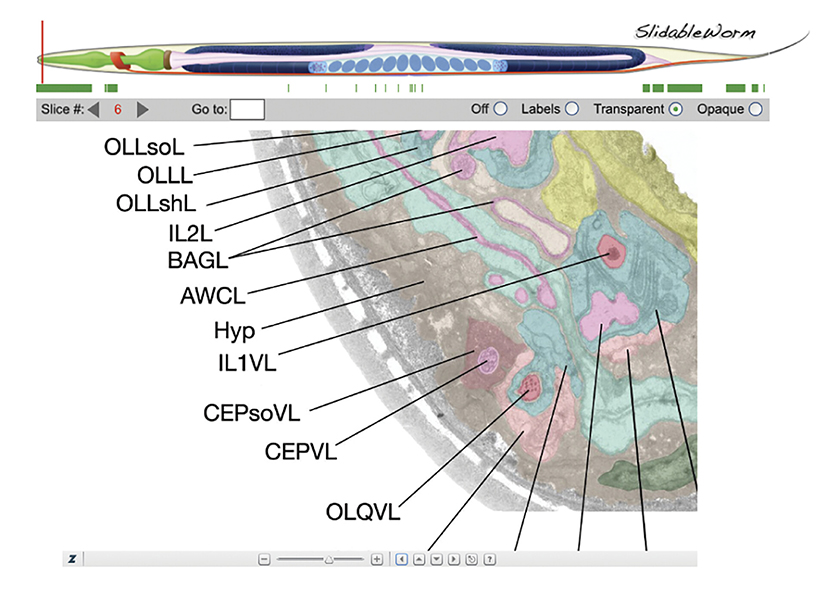

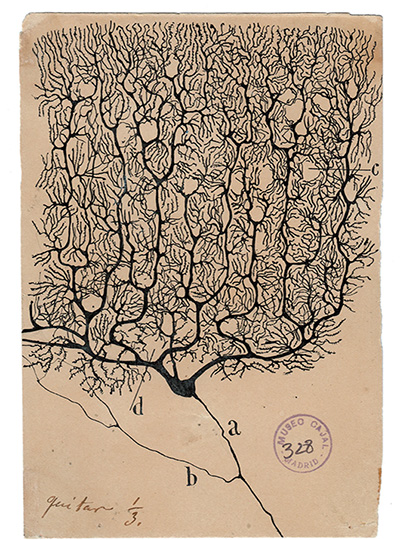

In 1996, Luo joined the Stanford University faculty and established his own research group focused on the molecular mechanisms of neuronal morphogenesis in the brain. His laboratory developed groundbreaking techniques, including Mosaic Analysis with a Repressible Cell Marker (MARCM) in fruit flies and Mosaic Analysis with Double Markers (MADM) in mice, that allow researchers to label and genetically manipulate individual neurons within otherwise normal brains. These innovations made it possible to image genetically defined and altered neurons as they grow, connect, and change over time. Luo and his colleagues used these tools to reveal how neurons sculpt their branching structures, prune away unnecessary connections, and find the precise partners they need to form functional circuits. His work illuminated the molecular choreography that ensures each neuron wires into the correct network, an essential step in building circuits for sensation, movement, memory, and emotion.

Another impactful innovation from Luo’s group, TRAP (Targeted Recombination in Active Populations), allows researchers to genetically tag neurons that are active during specific experiences. This technique has helped reveal how neural populations encode thirst, motivation, and long-term memories.

Most recently, Luo and his group have comprehensively defined the molecular codes that neurons in the fruit fly olfactory system use to recognize their correct partners. Their research demonstrated that a combinatorial pattern of cell-surface proteins precisely guides neurons to connect with one another and form a functional network. The team then genetically altered the molecular cues that govern these synaptic connections to rewire a neural circuit, producing a predicted change in the fly’s mating behavior.

Colleagues emphasize that Luo’s influence extends far beyond his own discoveries. Many of the molecular principles he has uncovered in simple model organisms have since proven to be conserved across species, underscoring their fundamental importance. His genetic tracing methods have been adopted by laboratories worldwide and applied not only in neuroscience but also in fields such as cancer biology, where tracing cell lineage is critical. He has also trained a generation of neuroscientists who have gone on to lead major research programs of their own, amplifying his impact across the field.

Luo has received numerous honors, including election to the National Academy of Sciences, the NAS Award in the Neurosciences, the Pradel Research Award, and the Society for Neuroscience’s Award for Education in Neuroscience. He has been a Howard Hughes Medical Institute Investigator since 2005. He is also the author of *Principles of Neurobiology*, a widely used textbook that has been translated into Chinese, Japanese, and Italian.

The Scolnick Prize recognizes discoveries that advance the understanding of the brain and its disorders. Luo’s work exemplifies this mission, providing tools and conceptual frameworks for understanding how neural circuits form and are refined to become functional, and how mutations disrupt these processes. As neuroscience enters an era defined by increasingly precise control over brain circuits, Liqun Luo’s contributions are both enabling and visionary.

The McGovern Institute will award the Scolnick Prize to Luo on June 16, 2026. At 4:00 pm, he will deliver a lecture titled “Wiring Specificity of Neural Circuits,” followed by a reception, at the McGovern Institute, 43 Vassar Street (Building 46, Room 3002), in Cambridge. The event is free and open to the public.