Using a new technique that can create vacancies at any site across a material and then shrink it to about 1/2,000 of its original volume, MIT researchers have designed nanotechnology devices that could be used for optical computing and other applications involving the manipulation of visible light.

The new fabrication technique, known as “implosion carving,” allows researchers to imprint features throughout a hydrogel using photopatterning. If patterned with a resolution of about 800 nanometers, these features can then be shrunk to less than 100 nanometers.

Because that resolution is smaller than the wavelengths of visible light, the devices can bend light in specific ways that allow them to perform optical computations.

“In order to enable nanophotonic applications in visible light, we need to make nanostructures with feature sizes with a resolution less than 100 nanometers. Only in that way can we precisely create the structure that can manipulate visible light,” says Quansan Yang, a former MIT postdoc, now an assistant professor at the University of Washington, and one of the lead authors of the new study.

In their paper, the researchers demonstrated a photonic device that can perform a simple digit-classification task, but future versions could be used for high-speed imaging and information processing, they say.

Gaojie Yang, a former MIT postdoc, is the co-lead author of the paper, which appears today in Nature Photonics. The paper’s senior authors are Peter So, director of the MIT Laser Biomedical Research Center (LBRC) and an MIT professor of biological engineering and mechanical engineering, and Edward Boyden, the Y. Eva Tan Professor in Neurotechnology at MIT and a professor of biological engineering, media arts and sciences, and brain and cognitive sciences. Boyden is also a Howard Hughes Medical Institute investigator and a member of MIT’s McGovern Institute for Brain Research, the Yang Tan Collective, and the Koch Institute for Integrative Cancer Research.

Nanoscale feature sizes

Photonic devices, which transmit and manipulate light, hold potential for use as optical computer chips that could offer an energy-efficient alternative to semiconductor chips. However, existing techniques for creating 3D photonic devices haven’t yet achieved the 100-nanometer resolution that is needed to channel visible light, which has wavelengths between 380 and 750 nanometers.

Two-photon lithography, an additive manufacturing technique, uses light to create 3D nanoscale features, but its resolution is coarser than 100 nanometers. Another technique, electron-beam lithography, can etch finer features onto a silicon chip, but it doesn’t generate 3D structures.

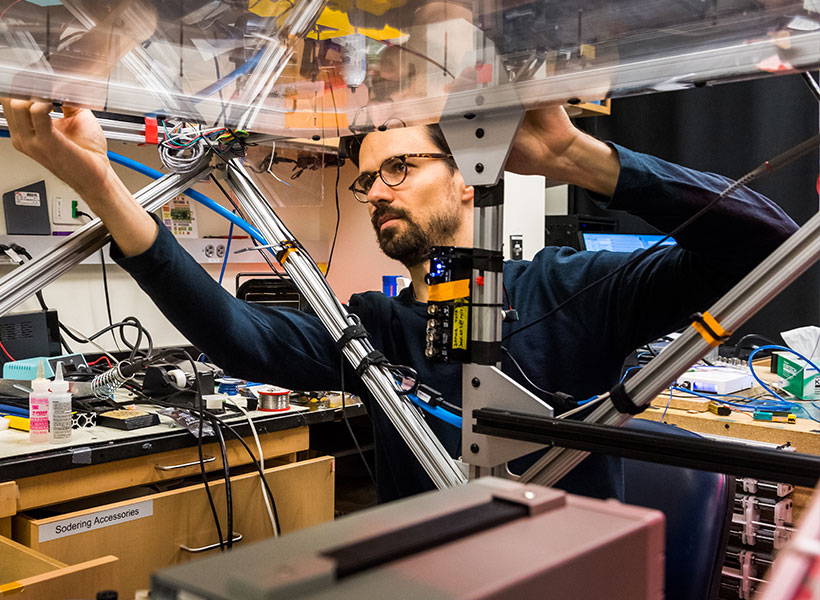

To make 3D devices with the necessary feature size, the researchers extended the concept of “implosion fabrication,” which Boyden’s lab developed in 2018, to create a new variant called “implosion carving.” In implosion carving, a laser creates vacancies — tiny voids where the hydrogel material has been removed — at precisely targeted locations. These vacancies exhibit different optical properties than the surrounding hydrogel. The hydrogel is then shrunk to bring the patterned features down to the nanoscale.

The carving process begins by immersing the hydrogel in a photosensitizing dye. The researchers then use a laser to excite the photosensitizer at specific places in the gel, which generates reactive oxygen species that cut the bonds holding the hydrogel together and leave a vacancy in that spot.

Once the desired vacancy pattern has been carved into the hydrogel, the researchers shrink it using a two-step process. First, they soak it in a solution containing ions, which causes it to shrink about tenfold in each dimension. To shrink it a little more, and to remove the watery solution, the hydrogel then undergoes a process called supercritical drying, which can remove liquid from a gel without damaging it.

At the end of the process, the hydrogel has been shrunk more than tenfold in each dimension, leading to a 2,000-fold reduction in volume.
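The volume and length figures are linked by simple arithmetic. If the gel shrinks by the same linear factor s along every axis, its volume shrinks by s³, so a 2,000-fold volume reduction corresponds to s ≈ 12.6 per dimension. A tenfold ion soak alone would account for only 10³ = 1,000-fold in volume, leaving supercritical drying to supply roughly another 1.26-fold per axis. The short Python sketch below works through this, assuming isotropic shrinkage (the text implies but does not state it); the helper names are hypothetical.

```python
# Minimal arithmetic sketch, assuming isotropic shrinkage (one linear factor
# for x, y and z). Function names are hypothetical helpers, not from the paper.


def linear_factor(volume_factor: float) -> float:
    """Per-dimension shrink factor implied by a volumetric reduction."""
    return volume_factor ** (1.0 / 3.0)


def shrunk_size(size_nm: float, volume_factor: float) -> float:
    """Feature size after the gel shrinks by the given volumetric factor."""
    return size_nm / linear_factor(volume_factor)


if __name__ == "__main__":
    s = linear_factor(2000)
    print(f"2,000-fold in volume -> {s:.1f}-fold per dimension")
    print(f"an 800 nm feature -> {shrunk_size(800, 2000):.0f} nm")
    # If the ion soak alone were exactly tenfold per axis (1,000-fold in volume),
    # supercritical drying would have to supply the remaining linear shrink.
    print(f"remaining linear shrink after 10x: {s / 10:.2f}-fold")
```

Under this assumption, an 800-nanometer laser-patterned feature ends up at roughly 63 nanometers, consistent with the sub-100-nanometer features described above.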

Computing with light

To demonstrate the versatility of this technique, the researchers used it to create several 3D shapes, including a helix and a structure inspired by a butterfly wing. Some of these structures are too thin, and have too high an aspect ratio, to be stably created using conventional two-photon lithography.

The researchers also created a device that performs a simple digit-classification task, which is traditionally used to test the performance of neural networks. During this task, the device was presented with a digit, such as 1 or 5, and had to light up a specific location to indicate which number it had detected.

To achieve this, the researchers patterned vacancies throughout the device so that it would act like a neural network. The pattern of vacancies would diffract input light as it passed through many layers of patterned hydrogel, so that the output light was determined by the shape of the digit that was entered into the system.
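This layered-diffraction scheme resembles what is often called a diffractive neural network: each patterned layer imposes a position-dependent phase delay, free-space propagation between layers mixes those contributions, and the detector region that collects the most light gives the answer. The NumPy sketch below simulates such a forward pass using angular-spectrum propagation. It is an illustration, not the authors' model: the wavelength, voxel pitch, layer spacing, the phase delay assigned to intact gel versus vacancies, the detector layout, and all function names are hypothetical. The random layers are untrained, so the predicted digit is meaningless until the vacancy pattern is designed.

```python
import numpy as np

# Every constant below is a hypothetical value for illustration, not from the paper.
WAVELENGTH = 532e-9       # assumed visible wavelength (m)
PITCH = 100e-9            # assumed lateral voxel pitch after shrinking (m)
LAYER_GAP = 2e-6          # assumed spacing between patterned layers (m)
DELTA_PHASE = np.pi / 2   # assumed extra phase delay of gel voxels vs. vacancies
N = 64                    # the simulated grid is N x N voxels


def propagate(field, distance):
    """Angular-spectrum free-space propagation; evanescent waves are dropped."""
    f = np.fft.fftfreq(field.shape[0], d=PITCH)
    fx, fy = np.meshgrid(f, f, indexing="ij")
    arg = 1.0 / WAVELENGTH**2 - fx**2 - fy**2
    kz = 2 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    transfer = np.where(arg > 0, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)


def transmission(vacancies):
    """Phase-only layer: True marks a carved vacancy, False marks intact gel."""
    return np.exp(1j * np.where(vacancies, 0.0, DELTA_PHASE))


def detector_masks():
    """Ten hypothetical 6x6 detector regions, one per digit, in two rows of five."""
    masks = []
    for k in range(10):
        mask = np.zeros((N, N), dtype=bool)
        row, col = (16 if k < 5 else 40), 6 + 11 * (k % 5)
        mask[row:row + 6, col:col + 6] = True
        masks.append(mask)
    return masks


def forward(image, layers):
    """Send an amplitude-encoded digit through each patterned layer in turn."""
    field = image.astype(complex)
    for vacancies in layers:
        field = propagate(field, LAYER_GAP) * transmission(vacancies)
    return propagate(field, LAYER_GAP)  # final hop to the detector plane


def classify(image, layers):
    """The predicted digit is the detector region that collects the most light."""
    intensity = np.abs(forward(image, layers)) ** 2
    scores = np.array([intensity[m].sum() for m in detector_masks()])
    return int(np.argmax(scores)), scores


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    layers = [rng.random((N, N)) < 0.5 for _ in range(5)]  # untrained, random
    digit_one = np.zeros((N, N))
    digit_one[16:48, 30:34] = 1.0  # a crude "1"
    label, scores = classify(digit_one, layers)
    print("predicted digit:", label)
```

For simplicity each layer here is a thin 2D phase mask and absorption and scattering are ignored, whereas the real devices are carved throughout a 3D gel.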

“This is a purely optical system that effectively performs optical computing,” So says.

“One of the very attractive features of this technology is that you can manipulate the property of the material at every tiny location,” says Dushan Wadduwage, an assistant professor at Old Dominion University and former MIT postdoc, who is also an author of the paper. “You have millions of different locations that you need to decide the property of, and that turns into a really interesting design problem where we can use deep-learning algorithms to find designs over these millions of parameters and come up with parts that go into optical systems in new ways.”
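Wadduwage's description of design as an optimization over millions of per-voxel parameters can be illustrated at toy scale. The sketch below optimizes a single continuous phase layer on a 32 x 32 grid by plain gradient ascent, using a hand-derived adjoint gradient, so that light concentrates on one detector spot. It is not the authors' procedure: the wavelength, pitch, layer gap, grid size, target location, and function names are all hypothetical, and the phases are treated as continuous rather than as the binary vacancy-or-gel choice that implosion carving produces.

```python
import numpy as np

# Toy inverse design of ONE phase layer; all constants are hypothetical.
WAVELENGTH = 532e-9  # assumed wavelength (m)
PITCH = 100e-9       # assumed voxel pitch (m)
GAP = 2e-6           # assumed layer-to-detector distance (m)
N = 32               # grid is N x N


def transfer_function(distance):
    """Angular-spectrum transfer function; evanescent components set to zero."""
    f = np.fft.fftfreq(N, d=PITCH)
    fx, fy = np.meshgrid(f, f, indexing="ij")
    arg = 1.0 / WAVELENGTH**2 - fx**2 - fy**2
    kz = 2 * np.pi * np.sqrt(np.maximum(arg, 0.0))
    return np.where(arg > 0, np.exp(1j * kz * distance), 0.0)


H = transfer_function(GAP)


def propagate(field):
    return np.fft.ifft2(np.fft.fft2(field) * H)


def propagate_adjoint(field):
    """Conjugate transpose of propagate, needed for the analytic gradient."""
    return np.fft.ifft2(np.fft.fft2(field) * np.conj(H))


def target_power(phase, source, target):
    """Optical power landing on the boolean target region."""
    out = propagate(np.exp(1j * phase) * source)
    return np.sum(np.abs(out[target]) ** 2)


def gradient(phase, source, target):
    """d(power)/d(phase) = 2 * Im(g * conj(v)), where g = P^H (M * U)."""
    v = np.exp(1j * phase) * source
    g = propagate_adjoint(np.where(target, propagate(v), 0.0))
    return 2.0 * np.imag(g * np.conj(v))


def optimize(source, target, steps=100, lr=0.1):
    """Plain gradient ascent on the per-voxel phases (a relaxed design)."""
    phase = np.zeros(source.shape)
    for _ in range(steps):
        grad = gradient(phase, source, target)
        # Normalize so no voxel's phase moves by more than lr radians per step.
        phase += lr * grad / (np.abs(grad).max() + 1e-12)
    return phase


if __name__ == "__main__":
    source = np.ones((N, N))               # uniform illumination
    target = np.zeros((N, N), dtype=bool)
    target[20:24, 8:12] = True             # hypothetical detector spot
    before = target_power(np.zeros((N, N)), source, target)
    phase = optimize(source, target)
    print(f"target power: {before:.1f} -> {target_power(phase, source, target):.1f}")
```

A practical design would optimize many layers together and then map the continuous phases onto vacancy-or-gel voxels; deep-learning frameworks such as PyTorch or JAX compute this kind of gradient automatically rather than by hand.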

The researchers now plan to use the same principles to build optical devices that could classify cells based on their state as they flow through a microfluidic device. This could help identify rare cells such as circulating tumor cells in a blood sample, they say.

This approach could also enable the creation of high-throughput imaging techniques for applications such as analyzing tissue samples from biopsies or surgical specimens. And, if adapted to work with other materials such as hydrophobic polymers, it could also be used to create channels within 3D nanofluidic devices.

Other authors of the paper include Takahiro Nambara, Hiroyuki Kusaka, Yuichiro Kunai, Alex Matlock, Corban Swain, Brett Pryor, Yannick Salamin, Daniel Oran, Hasindu Kariyawasam, Ramith Hettiarachchi, and Marin Soljacic.

The research was funded, in part, by the MIT-Fujikura Partnership Fund, the U.S. Army Research Office through the Institute for Soldier Nanotechnologies at MIT, Lisa Yang and Y. Eva Tan, John Doerr, the Open Philanthropy Project, the Howard Hughes Medical Institute, and the U.S. National Institutes of Health.