As part of our Ask the Brain series, we received the question, “Do thoughts have mass?” The following is a guest blog post by Michal De-Medonsa, technical associate and manager of the Jazayeri lab, who tapped into her background in philosophy to answer this intriguing question.

_____

To answer the question, “Do thoughts have mass?” we must, like any good philosopher, define something that already has a definition – “thoughts.”

Logically, we can assert that thoughts are either metaphysical or physical (beyond that, we run out of options). If our definition of thought is metaphysical, it is safe to say that metaphysical thoughts do not have mass since they are by definition not physical, and mass is a property of physical things. However, if we define a thought as a physical thing, it becomes a little trickier to determine whether or not it has mass.

A physical definition of thoughts falls into (at least) two subgroups – physical processes and physical parts. Take driving a car, for example – a parts definition describes the doors, motor, etc., and those parts have mass. A process definition describes the car being driven (turning the wheel, moving from point A to point B, etc.), and that process does not have mass. The process of driving is a physical process that involves moving physical matter, but we wouldn’t say that the act of driving has mass. The car itself, however, is an example of physical matter, and as any cyclist in the city of Boston is well aware – cars have mass. It’s clear that if we define a thought as a process, it does not have mass, and if we define a thought as physical parts, it does have mass – so, which one is it? In order to resolve our issue, we have to be incredibly precise with our definition. Is a thought a process or parts? That is, is a thought more like driving or more like a car?

Both physical definitions (process and parts) have merit. For a parts definition, we can look at what is required for a thought – neurons, electrical signals, neurochemicals, etc. This type of definition becomes quite imprecise and limiting. It doesn’t seem too problematic to say that the neurons, neurochemicals, etc. are themselves the thought, but this style of definition starts to fall apart when we try to include all the parts involved (e.g. blood flow, connective tissue, outside stimuli). When we look at a face, the stimuli received by the visual cortex are part of the thought – is the face part of a thought? When we look at our phone, is the phone itself part of a thought? A parts definition either needs an arbitrary limit, or we end up having to include all possible parts involved in the thought, ending up with an incredibly convoluted and effectively useless definition.

A process definition is more versatile and precise, and it allows us to include all the physical parts in a more elegant way. We can now say that all the moving parts are included in the process without saying that they themselves are the thought. That is, we can say blood flow is included in the process without saying that blood flow itself is part of the thought. It doesn’t sound ridiculous to say that a phone is part of the thought process. If we subscribe to the parts definition, however, we’re forced to say that part of the mass of a thought comes from the mass of a phone. A process definition allows us to be precise without being convoluted, and allows us to include outside influences without committing to absurd definitions.

Typical of a philosophical endeavor, we’re left with more questions and no simple answer. However, we can walk away with three conclusions.

- A process definition of “thought” allows for elegance and the involvement of factors outside the “vacuum” of our physical body; however, we lose out on some function by not describing a thought by its physical parts.

- The colloquial definition of “thought” breaks down once we invite a philosopher over to break it down, but this is to be expected – when we try to break something down, sometimes, it will break down. What we should be aware of is that if we want to use the word in a rigorous scientific framework, we need a rigorous scientific definition.

- Most importantly, it’s clear that we need to put a lot of work into defining exactly what we mean by “thought” – a job well suited to a scientifically informed philosopher.

Michal De-Medonsa earned her bachelor’s degree in neuroscience and philosophy from Johns Hopkins University in 2012 and went on to receive her master’s degree in history and philosophy of science at the University of Pittsburgh in 2015. She joined the Jazayeri lab in 2018 as a lab manager/technician and spends most of her free time rock climbing, doing standup comedy, and woodworking at the MIT Hobby Shop.

_____

Do you have a question for The Brain? Ask it here.

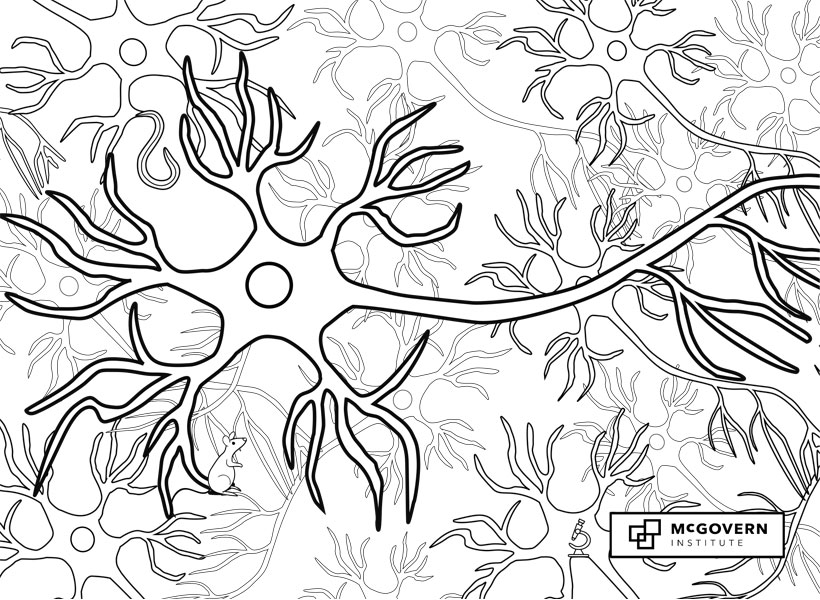

Signals flow through the nervous system from one neuron to the next across synapses.

The axon is the long, thin neural cable that carries electrical impulses called action potentials from the soma to synaptic terminals at downstream neurons.

The soma, or cell body, is the control center of the neuron, where the nucleus is located.

Long branching neuronal processes called dendrites receive synaptic inputs from thousands of other neurons and carry those signals to the cell body.