More than one million Americans are diagnosed with a chronic brain disorder each year, yet treatments for most complex brain disorders are inadequate or even nonexistent.

A major new research effort at MIT’s McGovern Institute aims to change how we treat brain disorders by developing innovative molecular tools that precisely target dysfunctional genetic, molecular, and circuit pathways.

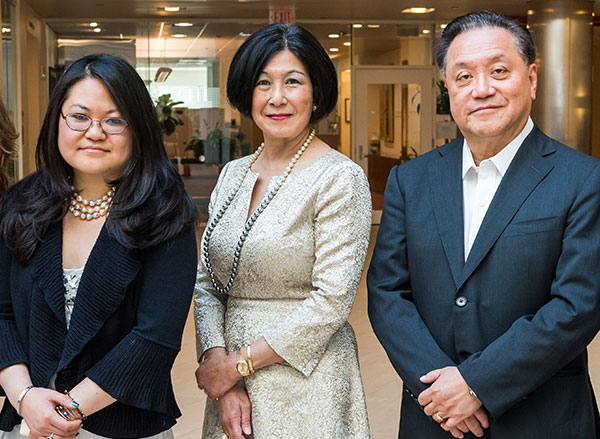

The K. Lisa Yang and Hock E. Tan Center for Molecular Therapeutics in Neuroscience was established at MIT through a $28 million gift from philanthropist Lisa Yang and MIT alumnus Hock Tan ’75. Yang is a former investment banker who has devoted much of her time to advocacy for individuals with disabilities and autism spectrum disorders. Tan is President and CEO of Broadcom, a global technology infrastructure company. This latest gift brings Yang and Tan’s total philanthropy to MIT to more than $72 million.

“In the best MIT spirit, Lisa and Hock have always focused their generosity on insights that lead to real impact,” says MIT President L. Rafael Reif. “Scientifically, we stand at a moment when the tools and insights to make progress against major brain disorders are finally within reach. By accelerating the development of promising treatments, the new center opens the door to a hopeful new future for all those who suffer from these disorders and those who love them. I am deeply grateful to Lisa and Hock for making MIT the home of this pivotal research.”

Engineering with precision

Research at the K. Lisa Yang and Hock E. Tan Center for Molecular Therapeutics in Neuroscience will initially focus on three major lines of investigation: genetic engineering using CRISPR tools, delivery of genetic and molecular cargo across the blood-brain barrier, and the translation of basic research into the clinical setting. The center will serve as a hub for researchers with backgrounds ranging from biological engineering and genetics to computer science and medicine.

“Developing the next generation of molecular therapeutics demands collaboration among researchers with diverse backgrounds,” says Robert Desimone, McGovern Institute Director and Doris and Don Berkey Professor of Neuroscience at MIT. “I am confident that the multidisciplinary expertise convened by this center will revolutionize how we improve our health and fight disease in the coming decade. Although our initial focus will be on the brain and its relationship to the body, many of the new therapies could have other health applications.”

There are an estimated 19,000 to 22,000 genes in the human genome, and a third of those genes are active in the brain, the highest proportion of genes expressed in any part of the body.

Variations in genetic code have been linked to many complex brain disorders, including depression and Parkinson’s. Emerging genetic technologies, such as the CRISPR gene editing platform pioneered by McGovern Investigator Feng Zhang, hold great potential for both targeting and fixing these errant genes. But the safe and effective delivery of this genetic cargo to the brain remains a challenge.

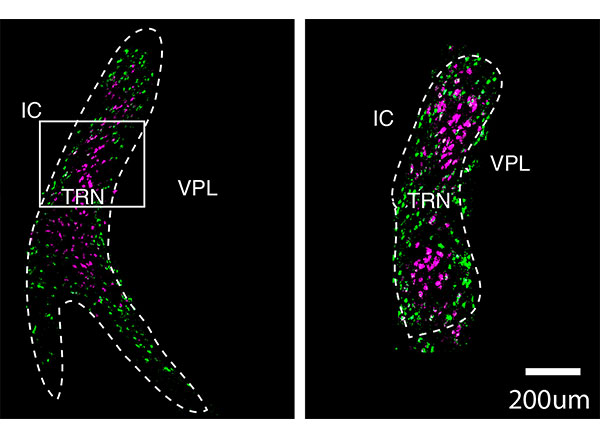

Researchers within the new Yang-Tan Center will improve and fine-tune CRISPR gene therapies and develop innovative ways of delivering gene therapy cargo into the brain and other organs. In addition, the center will leverage newly developed single-cell analysis technologies that are revealing cellular targets for modulating brain functions with unprecedented precision, opening the door for noninvasive neuromodulation as well as the development of medicines. The center will also focus on developing novel engineering approaches to delivering small molecules and proteins from the bloodstream into the brain.

Desimone will direct the center, and some of the initial research initiatives will be led by Associate Professor of Materials Science and Engineering Polina Anikeeva; Ed Boyden, the Y. Eva Tan Professor in Neurotechnology at MIT; Guoping Feng, the James W. (1963) and Patricia T. Poitras Professor of Brain and Cognitive Sciences at MIT; and Feng Zhang, James and Patricia Poitras Professor of Neuroscience at MIT.

Building a research hub

“My goal in creating this center is to cement the Cambridge and Boston region as the global epicenter of next-generation therapeutics research. The novel ideas I have seen undertaken at MIT’s McGovern Institute and Broad Institute of MIT and Harvard leave no doubt in my mind that major therapeutic breakthroughs for mental illness, neurodegenerative disease, autism and epilepsy are just around the corner,” says Yang.

Center funding will also be earmarked to create the Y. Eva Tan Fellows program, named for Tan and Yang’s daughter Eva, which will support fellowships for young neuroscientists and engineers eager to design revolutionary treatments for human diseases.

“We want to build a strong pipeline for tomorrow’s scientists and neuroengineers,” explains Hock Tan. “We depend on the next generation of bright young minds to help improve the lives of people suffering from chronic illnesses, and I can think of no better place to provide the very best education and training than MIT.”

The molecular therapeutics center is the second research center established by Yang and Tan at MIT. In 2017, they launched the Hock E. Tan and K. Lisa Yang Center for Autism Research, and two years later they created a sister center at Harvard Medical School. The two centers bring the unique strengths of each institution to bear on a shared goal: understanding the basic biology of autism and how genetic and environmental influences converge to give rise to the condition, then translating those insights into novel treatment approaches.

All tools developed at the molecular therapeutics center will be shared globally with academic and clinical researchers, with the goal of bringing one or more novel molecular tools to human clinical trials by 2025.

“We are hopeful that our centers, located in the heart of the Cambridge-Boston biotech ecosystem, will spur further innovation and fuel critical new insights to our understanding of health and disease,” says Yang.